Rain patters against the Seattle skyline as an AWS engineer hits ‘deploy’—suddenly, AI agents on Bedrock don’t just execute; they converse.

Stateful MCP client capabilities on Amazon Bedrock AgentCore Runtime flip the script on agent workflows. Developers have wrestled with stateless tools forever: your agent fires off a request, gets a response, done. But real work? That’s messy. It pauses for a user’s sign-off, asks an LLM to generate content mid-task, reports progress during long-running jobs. Stateless setups choke on that. No more.

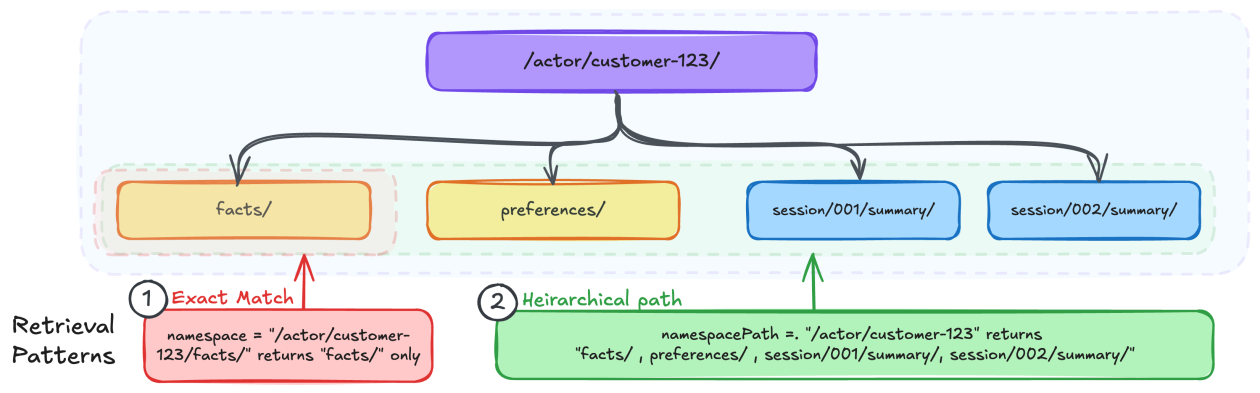

Here’s the thing. MCP, the Model Context Protocol, is an open standard that connects LLMs to the outside world. Servers offer tools, prompts, resources. Clients? They declare capabilities the server can call back into. Bedrock’s prior release covered stateless servers. Now, stateful mode unlocks the full bidirectional dance: elicitation for user input, sampling for client-side LLM calls, progress notifications streaming live.

Stateful MCP client capabilities on Amazon Bedrock AgentCore Runtime now enable interactive, multi-turn agent workflows that were previously impossible with stateless implementations.

But, deep breath, how’d they architect this? Flip one flag: stateless_http=False. Boom. AgentCore spins up a microVM per session. Isolated CPU, memory, filesystem. Sessions last up to 8 hours and time out after 15 minutes of inactivity. Requests find their session through the Mcp-Session-Id header. Dead simple, yet profound. It’s like web dev’s dark ages: CGI scripts per request, no memory. Then persistent sessions arrived. Agents just got their upgrade.
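Those two clocks are easy to mix up, so here is a toy model of them. It is a sketch of the lifetime rules quoted above, not AgentCore’s implementation: the Session class, its method names, and the choice to treat the limits as inclusive boundaries are all assumptions made for illustration.

```python
from dataclasses import dataclass

MAX_LIFETIME_S = 8 * 60 * 60  # 8-hour session cap quoted in the article
IDLE_TIMEOUT_S = 15 * 60      # 15-minute idle timeout quoted in the article


@dataclass
class Session:
    """Toy model of one session's lifetime (hypothetical, for illustration)."""

    created_at: float  # seconds, on any monotonic clock
    last_seen: float   # time of the most recent request

    def touch(self, now: float) -> None:
        # Any request on the session resets the idle clock, not the age clock.
        self.last_seen = now

    def is_expired(self, now: float) -> bool:
        too_old = now - self.created_at >= MAX_LIFETIME_S
        too_idle = now - self.last_seen >= IDLE_TIMEOUT_S
        return too_old or too_idle
```

The point of the model: activity keeps a session alive only until the 8-hour cap. After that, no amount of traffic saves it.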

Why Did Agents Need This Stateful Kick?

Stateless was fine for fire-and-forget tools. Input in, output out. Predictable. Scalable, even. But production agents? They’re not vending machines. They hit walls—need a user’s preference mid-flow, or an LLM’s creative spark without baking one into the server.

Elicitation shines here. Server pauses, fires an elicitation/create request with a message and a JSON schema. Client pops a form: “Confirm this delete?” User accepts, declines, cancels. Structured. No guesswork.
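On the wire, that exchange is plain JSON-RPC. The sketch below builds the request and reads the reply using the field names from the MCP specification (requestedSchema, action, content). The helper names and the delete-confirmation schema are made up for illustration, and a real SDK would do this for you.

```python
from itertools import count

_request_ids = count(1)  # simple JSON-RPC id generator for this sketch


def elicitation_request(message: str, requested_schema: dict) -> dict:
    """Build an elicitation/create request (sent from server to client)."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "elicitation/create",
        "params": {"message": message, "requestedSchema": requested_schema},
    }


def read_elicitation_result(response: dict):
    """Return the submitted form data on accept, None on decline or cancel."""
    result = response["result"]
    action = result["action"]
    if action not in ("accept", "decline", "cancel"):
        raise ValueError(f"unexpected action: {action!r}")
    return result.get("content") if action == "accept" else None


# Hypothetical schema for the "Confirm this delete?" form.
CONFIRM_SCHEMA = {
    "type": "object",
    "properties": {"confirm": {"type": "boolean"}},
    "required": ["confirm"],
}
```

Treating decline and cancel the same way is a simplification. A server may want to distinguish an explicit “no” from a dismissed dialog.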

Sampling? Server asks the client via a sampling/createMessage request. Client’s LLM fills the blank: dynamic content, no server-side model tax.
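A sampling round trip has the same JSON-RPC shape. This sketch follows the MCP specification’s field names (messages, maxTokens, and a result carrying role, content, model). The two helper functions are hypothetical, and the sketch only handles text content.

```python
def sampling_request(request_id: int, prompt: str, max_tokens: int = 200) -> dict:
    """Build a sampling/createMessage request (sent from server to client)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sampling/createMessage",
        "params": {
            "messages": [
                {"role": "user", "content": {"type": "text", "text": prompt}}
            ],
            "maxTokens": max_tokens,
        },
    }


def sampled_text(response: dict) -> str:
    """Pull the generated text out of the client's reply."""
    content = response["result"]["content"]
    if content.get("type") != "text":
        raise ValueError("this sketch only handles text content")
    return content["text"]
```

Notice what is missing: no model name, no API key. The client picks the model and pays for the tokens, which is exactly the “no server-side model tax” part.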

Progress notifications stream updates. Long job? “Fetching data… 30% done.” Users stay hooked, not ghosted.
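Progress updates are notifications, so they carry no id and expect no reply. A minimal sketch, assuming the notifications/progress shape from the MCP specification; the run_job generator and its step labels are invented for illustration.

```python
from typing import Iterator, List, Optional


def progress_notification(
    token: str,
    progress: float,
    total: Optional[float] = None,
    message: Optional[str] = None,
) -> dict:
    """Build a notifications/progress message (no "id": it is a notification)."""
    # The token echoes the progressToken the client attached to its request.
    params = {"progressToken": token, "progress": progress}
    if total is not None:
        params["total"] = total
    if message is not None:
        params["message"] = message
    return {"jsonrpc": "2.0", "method": "notifications/progress", "params": params}


def run_job(steps: List[str], token: str) -> Iterator[dict]:
    """Pretend long-running job: yield one notification per finished step."""
    for done, label in enumerate(steps, start=1):
        yield progress_notification(token, done, total=len(steps), message=label)
```

A client turns progress and total into the percentage the user sees. The server just keeps counting.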

One punchy truth: without state, agents were half-baked. This completes the spec.

How Does Bedrock’s AgentCore Runtime Actually Do It?

Under the hood, it’s the streamable HTTP transport. Run mcp.run(transport="streamable-http", stateless_http=False). Clients declare their capabilities in the handshake. Server calls back into the client as needed.
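What does “declare capabilities in the handshake” look like? Roughly this: the client’s initialize request lists what it can do. The sketch follows the MCP specification’s initialize shape. The helper names, the client name, and the protocol version string are assumptions, so check what your SDK actually negotiates.

```python
def initialize_request(
    request_id: int,
    client_name: str,
    client_version: str,
    protocol_version: str = "2025-06-18",  # assumed; use what your SDK negotiates
) -> dict:
    """Build a client initialize request advertising elicitation and sampling."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": protocol_version,
            # Progress is not declared here: a client opts in per request
            # by attaching a progressToken to that request's metadata.
            "capabilities": {"elicitation": {}, "sampling": {}},
            "clientInfo": {"name": client_name, "version": client_version},
        },
    }


def client_supports(init_request: dict, capability: str) -> bool:
    """Server-side check before calling back into the client."""
    return capability in init_request["params"]["capabilities"]
```

A well-behaved server checks this before it fires an elicitation or sampling request, and falls back gracefully when the capability is absent.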

MicroVMs per session: AWS isolation magic. No shared state leaks. Session expired? Requests with the old ID get a 404, and the client reinitializes. Clean.
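The expire-then-reinitialize loop is worth seeing from the client’s side. Below is an in-memory sketch: ToyServer stands in for the runtime and Client for an MCP client, both hypothetical. Only the Mcp-Session-Id header name and the 404 behavior come from the description above.

```python
class ToyServer:
    """In-memory stand-in for the runtime (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self.live = set()
        self._counter = 0

    def initialize(self) -> str:
        self._counter += 1
        session_id = f"session-{self._counter}"
        self.live.add(session_id)
        return session_id

    def expire(self, session_id: str) -> None:
        self.live.discard(session_id)

    def call(self, headers: dict, payload: str):
        session_id = headers.get("Mcp-Session-Id")
        if session_id not in self.live:
            return 404, ""  # unknown or expired session
        return 200, f"{session_id} handled {payload}"


class Client:
    """Retries once with a fresh session when the old one is gone."""

    def __init__(self, server: ToyServer) -> None:
        self.server = server
        self.session_id = server.initialize()

    def _send(self, payload: str):
        return self.server.call({"Mcp-Session-Id": self.session_id}, payload)

    def call(self, payload: str):
        status, body = self._send(payload)
        if status == 404:
            # Old session expired: start a new one, then retry the call once.
            self.session_id = self.server.initialize()
            status, body = self._send(payload)
        return status, body
```

One caveat the sketch makes obvious: the new session is a blank slate. Anything the old microVM held in memory or on disk is gone, so the client may need to replay context.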

| Stateless | Stateful |
|---|---|
| Simple tools | Multi-turn chats |
| No client caps | Elicitation, sampling, progress |

But here’s my unique angle, one AWS glosses over: this echoes the WebSocket revolution. WebSockets killed the request-response polling ping-pong for chat apps. Bedrock’s doing that for agents. Prediction? In 18 months, stateful MCP becomes table stakes: enterprises ditch custom hacks, flock to Bedrock for agent fleets. Anthropic, watch your tooling lead shrink.

Can Stateful MCP Make Bedrock Agents Production-Ready?

Absolutely, if you’re building interactive workflows. Code example? In pseudocode, a server mid-tool does something like await elicitation_create({"message": "Budget?", "schema": {…}}). Client handles the UI. The response flows back to the tool, which carries on from where it paused.
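To make the pause-and-resume flow concrete, here is a runnable sketch with a stubbed context. StubContext, its elicit method, plan_trip, and the budget schema are all hypothetical. A real MCP SDK supplies its own context object with its own method names, so treat this as the shape of the control flow and nothing more.

```python
import asyncio


class StubContext:
    """Hypothetical stand-in for an SDK request context; returns a canned reply."""

    def __init__(self, canned_result: dict) -> None:
        self._canned = canned_result

    async def elicit(self, message: str, schema: dict) -> dict:
        await asyncio.sleep(0)  # yield control, as a real round trip would
        return self._canned


async def plan_trip(ctx: StubContext, destination: str) -> str:
    """Tool body that pauses mid-flow to ask the user for a budget."""
    budget_schema = {  # hypothetical schema for this example
        "type": "object",
        "properties": {"budget": {"type": "number"}},
        "required": ["budget"],
    }
    answer = await ctx.elicit("Budget?", budget_schema)
    if answer["action"] != "accept":
        return f"Trip to {destination} not planned: user chose to {answer['action']}."
    return f"Planning {destination} within {answer['content']['budget']}."
```

Usage, with the user accepting: asyncio.run(plan_trip(StubContext({"action": "accept", "content": {"budget": 1500}}), "Lisbon")) returns "Planning Lisbon within 1500." The tool’s local variables survive the await, which is the whole point of keeping the session alive.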

Long-running? Progress ticks keep trust high. Sampling offloads LLM smarts—server stays lean.

Skepticism check: 8-hour cap. Fine for most, but 24/7 ops? Stitch sessions or beg AWS for extensions. Still, it’s a leap. No more polling hacks or WebSocket sidecars.

And deployment? Package it up, ship it to Bedrock, and it scales automatically. Your stateful server lives in isolated glory.

Wander a sec: remember Lambda’s cold starts killing chatty apps? MicroVMs here warm up sessions, persist state. Architectural shift—agents as state machines, not functions.

What’s the Real Edge Over Competitors?

Anthropic, which created MCP, has its own agent kits, but Bedrock bakes the protocol in natively. Open spec, no lock-in. Stateful sessions as a managed runtime? Competitors are playing catch-up.

Critique the spin: AWS frames this as completing MCP’s bidirectional story. Cute, but they led with stateless. Now they’re fixing their own gap. Smart pivot.

For devs: build once, deploy anywhere MCP lives. Why? Protocol’s open—your Bedrock server ports to others.

Short version: agents evolve. From robots to partners.

Why Does Stateful MCP Matter for Your Next Agent?

Because pausing for humans, or LLMs, unlocks complexity. Travel agent? “Allergy?” mid-booking. Code gen? Sample docstrings on the fly. Ops? Progress on deploys.

It’s the ‘how’ of agent maturity. Stateless: toys. Stateful: tools.

Frequently Asked Questions

What are stateful MCP client capabilities on Amazon Bedrock?

They let MCP servers on AgentCore Runtime request user input (elicitation), LLM generations (sampling), and send progress updates during multi-turn workflows—fixing stateless limits.

How do you enable stateful MCP on Bedrock AgentCore?

Set stateless_http=False in mcp.run() and deploy to AgentCore Runtime. Clients get a session ID that stays valid for up to 8 hours, or until the session sits idle for 15 minutes.

Does stateful MCP replace stateless agent tools?

No—use stateless for simple calls, stateful for interactive ones. Both shine in their lanes.