In the past nine months, every AI lab dead serious about developers has shelled out for their own devtools. Google DeepMind kicked it off with the Antigravity team last July. Anthropic followed with Bun in December. And yesterday? OpenAI sealed the deal on Astral.

Boom. That’s your wake-up call: a full-court press on the developer toolchain, with Python’s beating heart as the latest prize.

Look, if AI’s the new electricity — as Andrew Ng loves to say — then devtools are the wiring. Without uv, ruff, and ty from Astral, your frontier models choke on slow package installs and linting hell. OpenAI didn’t just buy a team; they bought the plumbing that makes agentic coding fly.

Why Are AI Labs Suddenly DevTools Obsessed?

Here’s the thing. Coding isn’t a side hustle anymore. It’s the rocket fuel. Fidji Simo at OpenAI just axed “side quests” like shopping, where Walmart’s conversions reportedly ran at a measly third of the click-out rate, to laser-focus on enterprise alliances and, yep, coding via Astral. They’re even mashing ChatGPT and Codex into one superapp. We called that play years ago, but damn, it’s accelerating.

“Astral—the team behind uv, ruff, and ty—is joining OpenAI’s Codex team,” @charliermarsh announced, with @gdb confirming from OpenAI’s side.

That quote? Pure fire. uv alone is pitched as Python’s fastest package manager, with Astral claiming installs 10-100x faster than pip. Ruff? A linter that smokes everything else. These aren’t toys; they’re the difference between an agent that hallucinates imports and one that ships production code.
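To make the "hallucinated imports" point concrete, here's a minimal sketch of the kind of guardrail an agent loop could run before it ships anything. It leans only on Python's standard library (`ast` and `importlib`), not on ruff or uv themselves, and the function name `unresolved_imports` is made up for illustration. Treat it as a toy stand-in for what real tooling does far faster and more thoroughly.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that cannot be found locally.

    Hypothetical helper: relative imports are skipped, since they refer to the
    project itself rather than to installed packages.
    """
    tree = ast.parse(source)
    names: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # `import a.b.c` only needs the top-level package `a` to exist.
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names.add(node.module.split(".")[0])

    missing = []
    for name in sorted(names):
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            found = False
        if not found:
            missing.append(name)
    return missing


# Pretend this string came back from a coding agent.
generated = "import json\nimport totally_made_up_pkg_xyz\nfrom os import path\n"
print(unresolved_imports(generated))  # ['totally_made_up_pkg_xyz']
```

An agent harness could feed that list back to the model as an error, or hand it to a package manager to resolve. The faster that round trip, the more iterations the agent gets per minute, which is the whole pitch for fast tooling.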

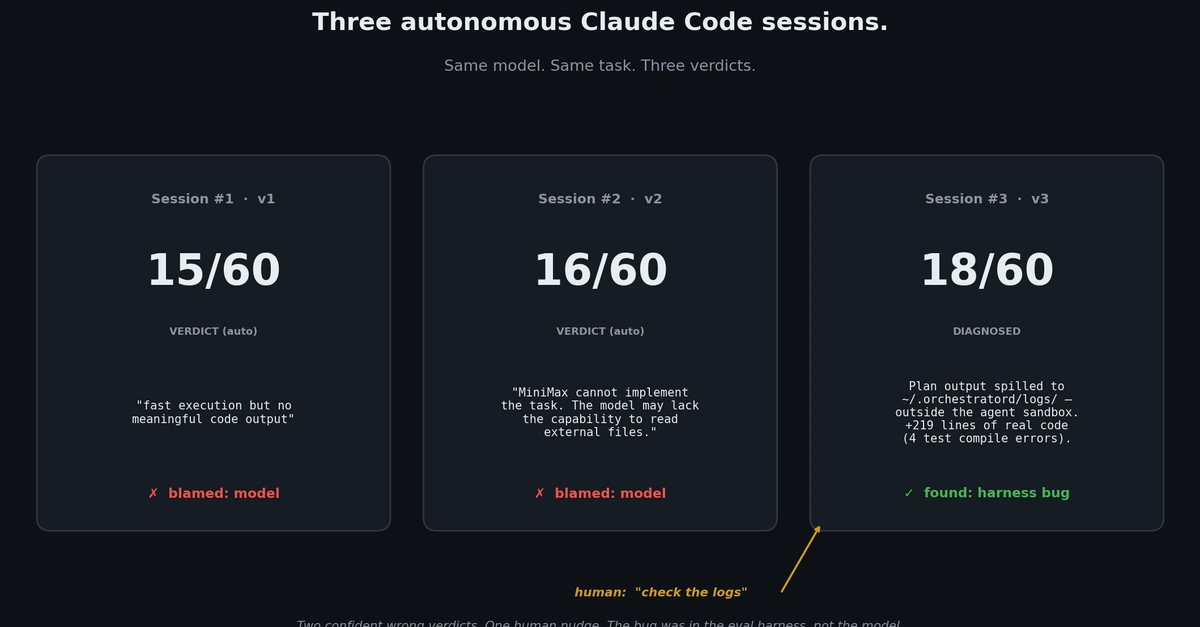

But wait — Anthropic’s not sleeping. They beefed up Claude Code with messaging channels for ambient access. No more API ping-pong; developers chat via Slack or whatever, agents hum in the background. Persistent workflows. That’s the moat.

And Cursor? Their Composer 2 dropped with frontier-class perf at $0.50 per million input tokens — benchmarks hitting 73.7 on SWE-bench Multilingual. A 40-person team, RL-trained across global clusters. The world’s converging on agent-native UIs, like Theo said.

This is recursive magic. Agents write code that trains better agents.

We nailed the “1+2=3” thesis three years back in Rise of the AI Engineer — LLMs plus software equals superpowers — but undersold how agentic coding loops back to model improvement. Claude Code, MiniMax 2.7: all screaming it. AIs engineering AIs. Until we can’t tell the humans from the machines.

Remember the 90s browser wars, Netscape vs. IE? Microsoft bundled everything into Windows, owned the stack, crushed souls. This is AI’s browser war, but for IDEs. Labs aren’t building models in a vacuum; they’re forging the agent OS. LangChain’s Fleet for agent fleets, Cognition’s Devin teams in VMs, AgentUI juggling specialists. Forget single agents; it’s managed runtimes, credential vaults, audit trails. The center of gravity? Fleets of AIs, orchestrated like an OS kernel.

Yuval nailed it: agents alone are dead abstractions. We need allocation, resources, contexts. Enterprise control planes incoming — because who trusts a rogue Devin with your AWS keys?

Can Developers Ride This Wave or Get Swept Under?

This shift is exhilarating. Imagine: your IDE isn’t passive anymore. It’s alive, pretraining on your repos, RL-finetuning for your stack. Cursor’s doing it. OpenAI will with Astral’s tools baked in.

OpenAI’s PR spins this as “unifying apps,” but it’s deeper. Dropping shopping? Smart, brutal. Retail’s a distraction when coders build empires. Fidji’s prioritizing Frontier Alliances: think Salesforce, not Walmart.

Bold prediction: By 2026, 80% of new software ships via AI agents, not human keystrokes. We’ll look back at manual coding like we do punch cards. And the lab owning the dev stack? They’ll print the money.

Meanwhile, Twitter’s buzzing. Simon Willison is geeking out on Python tooling moats. Kimmonismus is hyping Cursor’s price/perf. It’s not hype; it’s momentum. But devs, adapt fast. Agentic coding’s a third of AI engineering now, per our old summits, and climbing.

Resistance is futile; join the agents.

LangSmith Fleet nails enterprise needs: memory, tools, permissions, Slack hooks. Devin decomposes tasks into parallel Devins running in sandboxes. Hrishioa warns that long-horizon work needs real infra. lvwerra’s AgentUI? A code-search-multimodal symphony. The stack’s maturing, from single hacks to OS-level.
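What does "fleet plus control plane" look like in code? Here's a rough sketch, and only a sketch: it is not how LangSmith Fleet, Devin, or AgentUI work under the hood. Every name in it (`ControlPlane`, `run_subtask`, `fan_out`, the agent and tool labels) is hypothetical. It shows three ideas from the paragraph above in miniature: per-agent permissions, an audit trail, and subtasks fanned out to parallel workers.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class ControlPlane:
    """Hypothetical control plane: grants tools per agent and logs every request."""
    grants: dict[str, set[str]]
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)
    _lock: threading.Lock = field(default_factory=threading.Lock, repr=False)

    def authorize(self, agent: str, tool: str) -> bool:
        allowed = tool in self.grants.get(agent, set())
        with self._lock:  # workers run concurrently, so guard the shared log
            self.audit_log.append((agent, tool, allowed))
        return allowed


def run_subtask(plane: ControlPlane, agent: str, tool: str, task: str) -> str:
    """Stand-in for a sandboxed worker; a real one would call a model and tools."""
    if not plane.authorize(agent, tool):
        return f"{agent}: denied {tool}"
    return f"{agent}: ran {tool} on {task}"


def fan_out(plane: ControlPlane, assignments: list[tuple[str, str, str]]) -> list[str]:
    """Run each (agent, tool, task) assignment in parallel; results keep input order."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(run_subtask, plane, *a) for a in assignments]
        return [f.result() for f in futures]


plane = ControlPlane(grants={"tester": {"pytest"}, "deployer": {"aws"}})
results = fan_out(plane, [
    ("tester", "pytest", "unit tests"),
    ("tester", "aws", "prod deploy"),  # not granted, so it gets denied and logged
])
print(results)
print(sorted(plane.audit_log))
```

The design choice worth noticing: the worker never sees credentials directly. It asks the control plane, and the answer gets logged either way. That's the answer to the rogue-agent-with-your-AWS-keys question.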

The Agent OS Dawn — Or Just Hype Overload?

Is this a corporate fever dream? Nah. Look at the backdrop: OpenClaw, gpt-oss, and Whisper already sit in OpenAI’s OSS arsenal. Astral fits like a glove. GDM’s Antigravity? Stealth infra play. Bun? JS speed demon for Anthropic.

There’s a historical parallel: Apple bringing chip design in-house and eventually ditching Intel for its own ARM-based silicon, all for control. AI labs are ditching commoditized APIs for owned tooling. Vertical integration 2.0. They’ll train on their agents’ code, iterate privately, lap the field.

Picture a world where software blooms exponentially. AIs as engineers: tireless, recursive, brilliant. We’re not losing jobs; we’re gaining gods in the machine.

Frequently Asked Questions

What is OpenAI buying with Astral?

Astral brings uv (a blazing package manager), ruff (an ultra-fast linter), and ty (a type checker): core Python tooling now fueling OpenAI’s Codex push for agentic supremacy.

Why did Anthropic acquire Bun?

Bun’s a zippy JS runtime; it supercharges Claude Code’s workflows, letting agents run ambiently without slowdowns — key for dev moats.

Will AI devtools replace human coders?

Not yet — but they’ll amplify. By 2026, expect 80% of routine code agent-written; humans orchestrate the visions.