20 million trademarks filed globally each year. That’s the backlog beast IP offices wrestle with daily: logos that look too close, jingles that echo suspiciously.

And here’s Snowflake stepping in with AI-powered intellectual property similarity detection, all baked into their cloud. No external servers. No data smuggling across borders. Just vectors doing the heavy lifting.

Look, public sector folks (think patent examiners buried in queues) have got data sovereignty nightmares. One rogue API call, and sensitive designs leak. Snowflake’s play? Lock it all down with Snowpark Container Services.

The Vector Fingerprint Trick That Scales

Raw images and audio clips land on internal stages. GPU-powered containers mount ’em directly, no copy-paste drama. A Flask service inside crunches embeddings behind SQL functions (UDFs backed by the container). Call one from any query and, boom, you get 768-dimensional vectors for logos.
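
Here’s a minimal sketch of the handler such a service might run. It assumes the batch JSON format Snowflake uses for service functions: rows arrive as `[row_index, argument]` pairs under a `data` key and must come back in the same shape. `embed_image`, `handle_batch`, and the `/embed` route are hypothetical names. The fake embedding stands in for the real ViT call so the batching logic runs without a GPU.

```python
import hashlib
import json
import math

EMBED_DIM = 768  # ViT-base hidden size, matching the 768-dim logo vectors


def embed_image(stage_path: str) -> list[float]:
    """Hypothetical stand-in for the real model call.

    A real service would read the file from the mounted stage and run ViT.
    Here we derive a deterministic, unit-length pseudo-vector from the path
    so the request/response plumbing can be exercised on its own.
    """
    digest = hashlib.sha256(stage_path.encode()).digest()
    raw = [digest[i % len(digest)] - 127.5 for i in range(EMBED_DIM)]
    norm = math.sqrt(sum(x * x for x in raw))
    return [x / norm for x in raw]


def handle_batch(request_body: str) -> str:
    """Answer one service-function call.

    Assumed format: {"data": [[row_index, arg], ...]} in,
    {"data": [[row_index, result], ...]} out, row indexes echoed back.
    """
    rows = json.loads(request_body)["data"]
    results = [[row[0], embed_image(row[1])] for row in rows]
    return json.dumps({"data": results})


# Wiring into Flask (not run here) might look like:
#   @app.post("/embed")
#   def embed():
#       return Response(handle_batch(request.get_data(as_text=True)),
#                       mimetype="application/json")
```

Keeping the batch logic in a plain function makes it testable without a web server. Flask only has to pass the raw body through.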

It’s elegant. Or sneaky smart, depending on your cynicism. Examiners fire up a Streamlit app (also native to Snowflake) and upload a suspect logo, and cosine similarity ranks the registry hits. Top matches glow red if they’re likely clones.
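
The ranking step itself is small. Here’s a minimal pure-Python sketch. It assumes embeddings are plain float lists and the registry is a dict mapping a hypothetical asset ID to its vector. The 0.95 flag cut-off is an assumed value, not one Snowflake publishes.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Dot product divided by the product of the two vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_registry(query, registry, k=10, flag_at=0.95):
    """Return the k closest registry entries, highest similarity first.

    Each hit is (asset_id, score, flagged). flagged marks scores at or
    above flag_at, an assumed "glow red" cut-off.
    """
    scored = [(asset_id, cosine_similarity(query, vec)) for asset_id, vec in registry.items()]
    scored.sort(key=lambda hit: hit[1], reverse=True)
    return [(asset_id, score, score >= flag_at) for asset_id, score in scored[:k]]


# Toy 3-dim vectors with hypothetical IDs; real logo vectors have 768 dims.
registry = {
    "TM-001": [1.0, 0.0, 0.0],
    "TM-002": [0.9, 0.1, 0.0],
    "TM-003": [0.0, 1.0, 0.0],
}
hits = rank_registry([1.0, 0.05, 0.0], registry, k=3)
print([asset_id for asset_id, _, _ in hits])  # → ['TM-001', 'TM-002', 'TM-003']
```

Cosine similarity compares only direction and ignores vector length. That’s why it suits embeddings whose magnitude carries little meaning.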

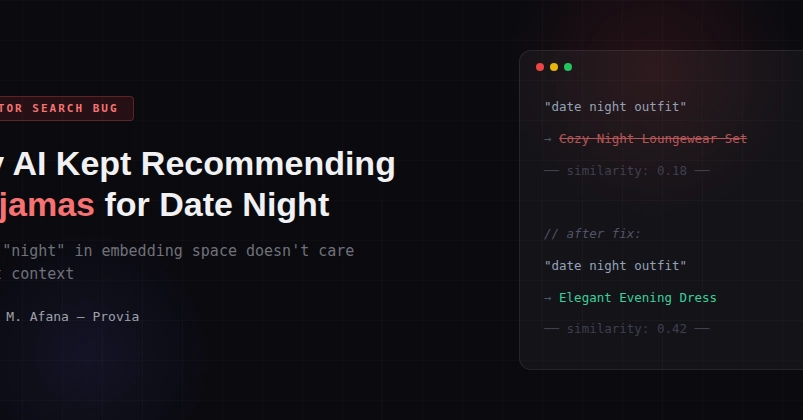

But audio? That’s the curveball. Two pop jingles shouldn’t score as matches just ’cause they’re both bouncy.

“CLAP produces semantic embeddings that cluster audio by genre and type. When we tested it against our jingle corpus, all files within a similar genre scored 0.93-0.99 cosine similarity.”

CLAP flops hard here: turns out contrastive models love vibes over specifics. The Snowflake crew pivoted. (They don’t name the replacement audio model in the write-up, but whispers point to specialized audio transformers tuned for motifs, not moods.)
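
Why would every jingle score 0.93 or higher? One plausible mechanism: if embeddings share a large common "genre" direction, that shared component dominates the dot product and squeezes every cosine toward 1. The toy sketch below shows the effect using invented 4-dim vectors, not CLAP output. It also shows mean-centering as a quick diagnostic. Centering is a generic trick, not the fix the Snowflake team reports; per the write-up, they moved away from CLAP.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length float lists."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def center(vectors):
    """Subtract the corpus mean from every vector (mean-centering)."""
    n = len(vectors)
    mean = [sum(col) / n for col in zip(*vectors)]
    return [[x - m for x, m in zip(v, mean)] for v in vectors]


# Invented "embeddings": a big shared genre coordinate (10.0) plus a
# small, mutually orthogonal track-specific part.
jingle_a = [10.0, 1.0, 0.0, 0.0]
jingle_b = [10.0, 0.0, 1.0, 0.0]
jingle_c = [10.0, 0.0, 0.0, 1.0]

raw = cosine(jingle_a, jingle_b)          # shared component dominates
a_c, b_c, c_c = center([jingle_a, jingle_b, jingle_c])
centered = cosine(a_c, b_c)               # only track-specific parts remain
print(round(raw, 3), round(centered, 3))  # → 0.99 -0.5
```

The raw score is 100/101 ≈ 0.99 even though the track-specific parts share nothing. Once the common component is removed, the same pair scores -0.5.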

ViT for images nails it out of the gate: Google’s vit-base-patch16-224-in21k captures colors, shapes, and layouts in its CLS token embedding. It’s pretrained on ImageNet-21k, and no fine-tuning was needed. Packaging? Distinguished. Beer labels? Nailed.

One punchy truth: this isn’t just tech porn. It’s a governance flex. Everything (models, data, queries) stays inside Snowflake’s RBAC fortress. Audit logs catch every peek. Cut off internet access? The system shrugs and keeps humming.

Why Ditch Humans for Math in IP Wars?

Examiners eyeball a dozen logos an hour, tops. Audio? Forget it: you’re not DJing through 10,000 clips hunting for that sneaky melody rip-off. Subjectivity kills consistency; one examiner’s ‘close enough’ is another’s ‘register it.’

Vectors fix that. Cosine similarity is pure, repeatable math. A 0.95 score? Too damn close: auto-flag. Calibrate the threshold against past disputes, and backlogs start to melt.
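
Training a threshold on disputes can be as simple as picking the cut-off that best reproduces past rulings. Here’s a minimal sketch. It assumes you have (similarity score, ruled confusing?) pairs from settled cases. The sample data is invented. Plain accuracy is an assumed objective; a real office might weight missed infringements more heavily.

```python
def best_threshold(disputes):
    """Pick the cut-off that best reproduces past dispute outcomes.

    disputes: list of (similarity_score, was_ruled_confusing) pairs.
    Every observed score is tried as a candidate; a pair counts as flagged
    when score >= threshold. Ties keep the lowest threshold tried.
    Returns (threshold, accuracy).
    """
    best = None
    for t in sorted({score for score, _ in disputes}):
        correct = sum((score >= t) == ruled for score, ruled in disputes)
        accuracy = correct / len(disputes)
        if best is None or accuracy > best[1]:
            best = (t, accuracy)
    return best


# Invented history: three pairs ruled confusing, three ruled fine.
history = [(0.97, True), (0.95, True), (0.91, True),
           (0.90, False), (0.85, False), (0.60, False)]
print(best_threshold(history))  # → (0.91, 1.0)
```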

Skeptical take? Sure, models sometimes find ‘similarities’ that aren’t there; ViT might flag abstract art as kin to a swoosh. But iterate on the embeddings, A/B test against human verdicts, and it beats tired eyes.
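
For the A/B step, precision tells you how often a model flag matches an examiner’s call. The abstract-art-vs-swoosh problem shows up as low precision. Recall tells you how many examiner-confirmed conflicts the model catches. Here’s a minimal sketch with invented verdicts; `compare_to_examiners` is a hypothetical helper.

```python
def compare_to_examiners(model_flags, human_flags):
    """Score model flags against examiner verdicts on the same cases.

    Both arguments are equal-length lists of booleans (True = "too similar").
    Returns precision, recall, and the raw agreement rate.
    """
    pairs = list(zip(model_flags, human_flags))
    tp = sum(m and h for m, h in pairs)      # both say "conflict"
    fp = sum(m and not h for m, h in pairs)  # model flags, examiner clears
    fn = sum(h and not m for m, h in pairs)  # examiner flags, model misses
    agree = sum(m == h for m, h in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall, "agreement": agree / len(pairs)}


result = compare_to_examiners(
    model_flags=[True, True, False, True, False],
    human_flags=[True, False, False, True, True],
)
```

On this toy batch, precision and recall both come out at 2/3 and agreement at 0.6.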

Historical parallel nobody’s drawing: 19th-century patent offices were swamped by Industrial Revolution gadgets. Steam engines everywhere, copycats rampant. They hired clerks by the thousands; costs ballooned and delays stifled innovation. Snowflake’s vector vault? A mechanical calculator for IP, version 2.0.

And the why underneath: an architectural shift toward data gravity. Why ship gigabytes of IP assets across the network to AWS SageMaker or whatever? In Snowflake, SPCS GPUs run inference on-site, the VECTOR type is native, and search is buttery. The public sector loves it: no egress, full compliance.

Is Snowflake’s IP Similarity Bulletproof for Audio?

Audio’s the weak link. CLAP’s genre bias wrecked the prototypes: two unrelated pop hooks scored like twins. The fix? Swap to models that prioritize spectrograms, pitch sequences, and rhythm fingerprints. (Bet it’s something like AudioMAE or a fine-tuned wav2vec; the post trails off there.)
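
Here’s what pitch sequences can buy you. A classic symbolic-music trick compares melodies by the intervals between notes. A hook transposed to a new key still matches, while a different tune in the same genre does not. This is a swapped-in illustration, not Snowflake’s audio model. It assumes pitches (as MIDI note numbers) have already been extracted from the audio by some pitch tracker. The melodies are invented.

```python
def interval_ngrams(pitches, n=3):
    """Turn a MIDI pitch sequence into a set of interval n-grams.

    Using the steps between notes instead of the notes themselves makes
    the fingerprint ignore key changes (transposition).
    """
    intervals = [b - a for a, b in zip(pitches, pitches[1:])]
    return {tuple(intervals[i:i + n]) for i in range(len(intervals) - n + 1)}


def motif_similarity(p, q, n=3):
    """Jaccard overlap of the two melodies' interval n-grams (0.0 to 1.0)."""
    a, b = interval_ngrams(p, n), interval_ngrams(q, n)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


hook = [60, 62, 64, 60, 67, 65]            # invented hook: C D E C G F
transposed = [p + 5 for p in hook]         # same hook, up a fourth
other_tune = [60, 60, 67, 67, 69, 69]      # unrelated melody
print(motif_similarity(hook, transposed))  # → 1.0
print(motif_similarity(hook, other_tune))  # → 0.0
```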

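Data flow’s tight: stage → container → embedding → table → VECTOR_COSINE_SIMILARITY. A top-10 lookup is a plain ORDER BY. Here, query_vec stands for the suspect asset’s embedding, and it must have the same VECTOR type and dimension as the stored column. asset_id is an illustrative column name:

```sql
SELECT asset_id, VECTOR_COSINE_SIMILARITY(embedding, query_vec) AS score
FROM registry ORDER BY score DESC LIMIT 10;
```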

It scales to millions of registry entries. GPU acceleration on the embedding side means seconds, not hours.

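Critique the spin: Snowflake crows that ‘no data leaves.’ True, but the containers pull model weights from Hugging Face at first boot. After deploy? Air-gapped bliss. Smart for government networks, but don’t sleep on drift. Refresh the models periodically, and re-embed the registry when you do, since vectors from different models aren’t comparable. Otherwise yesterday’s embeddings will blind you to new tricks.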

Bold prediction: this blueprint hits passports next. Facial recognition vectors for duplicate IDs? Voice biometrics to catch visa fraud? Every bureaucracy vectorizes by 2026.

What Makes This Architecture a Deep-Dive Win

Four pillars: SPCS for GPUs, the VECTOR type, cosine similarity, and a Streamlit UI. No polyglot mess: SQL functions call the containers over HTTP, and embeddings land in tables. Governance? Baked in.

Messy human bit: prototyping exposed CLAP’s flaws fast. That’s engineering gold—fail cheap, pivot.

Wander a sec: imagine examiners sipping coffee while the Streamlit dashboard pings ‘0.92 match to 2018 Nike swoosh variant.’ Consistent. Fast. Defensible in court.

Downsides? Embeddings ain’t perfect law. They’re overly semantic, so a high score can’t tell a pixel-for-pixel copy from a design that merely shares the concept. A hybrid with perceptual hashes? Future patch.
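
A perceptual hash is the usual complement. Below is a minimal average-hash (aHash) sketch in pure Python. It assumes the image has already been downscaled to a small grayscale grid; in practice an imaging library would do that step. The 4x4 logo is invented. Near-identical copies land at or near Hamming distance 0, whatever the embedding model thinks the image means.

```python
def average_hash(pixels):
    """Average hash (aHash) of a small grayscale image.

    pixels: rows of 0-255 intensities, already downscaled (8x8 is typical).
    Each bit is 1 where the pixel is brighter than the image mean.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return [1 if p > mean else 0 for p in flat]


def hamming(h1, h2):
    """Number of differing bits; 0 means the images hash identically."""
    return sum(a != b for a, b in zip(h1, h2))


# Invented 4x4 "logo" and two variants.
logo = [[0, 0, 255, 255],
        [0, 0, 255, 255],
        [255, 255, 0, 0],
        [255, 255, 0, 0]]
brightened = [[min(p + 10, 255) for p in row] for row in logo]  # near-copy
inverted = [[255 - p for p in row] for row in logo]             # different image

print(hamming(average_hash(logo), average_hash(brightened)))  # → 0
print(hamming(average_hash(logo), average_hash(inverted)))    # → 16
```

A hybrid pipeline could flag a pair when either the hash distance is tiny (a literal copy) or the cosine score clears the threshold (a conceptual knock-off).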

It’s not hype. It’s how clouds eat legacy workflows.

Frequently Asked Questions

What is Snowflake’s AI-powered IP similarity detection?

It’s a system using vector embeddings to compare trademarks (images/audio) against registries, running fully in Snowflake for secure, scalable checks—no data exits.

How does vector similarity work for logos and jingles?

Assets are converted to numerical vectors by deep models (ViT for images, a specialized model for audio); cosine similarity scores then flag close matches mathematically and consistently.

Can governments use this without internet access?

Yes—models load at container startup; post-deploy, it runs air-gapped, perfect for strict networks.