Subtitles matter. More than you think.

Enter AI-Mimi, Japan’s hybrid human-plus-AI approach to putting subtitles on live TV. It’s aimed squarely at Deaf and hard-of-hearing viewers, but let’s be real: everyone loves ’em now. I’ve covered tech accessibility for two decades, from clunky early captioning boxes to today’s AI hype, and this one has me half-impressed, half-wary. In Japan, 360,000 people are Deaf or hard of hearing, and another 14 million seniors have hearing issues. Local stations? They’re drowning in costs: tens of millions of yen for equipment and staff per setup. No wonder more than 100 local channels skip live subtitles.

SI-com and its parent company, ISCEC Japan, kicked off pilots in 2018, teaming human typists with Microsoft Azure Cognitive Services. It’s not pure AI magic: the AI speeds things up, and humans tweak its output for accuracy and for Japanese nuances that sign language users might otherwise miss. They even won a Microsoft AI for Accessibility grant. Nice badge, but grants don’t pay the bills.
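The pilots’ exact division of labor between Azure and the human typists isn’t spelled out, so treat this as a minimal Python sketch of the general human-in-the-loop pattern, not SI-com’s actual pipeline. It assumes the recognizer attaches a confidence score to each chunk of speech; the `Segment` type, the `hybrid_captions` function, and the 0.85 cutoff are all hypothetical. Confident text goes straight to air, and shaky text gets routed to a human editor first.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass
class Segment:
    """One chunk of recognized speech (hypothetical structure)."""
    text: str
    confidence: float  # assumed 0.0-1.0 score attached by the recognizer


def hybrid_captions(
    segments: Iterable[Segment],
    human_edit: Callable[[str], str],
    threshold: float = 0.85,  # assumed cutoff, not a documented AI-Mimi setting
) -> Iterator[str]:
    """Yield caption text, sending low-confidence segments to a human editor."""
    for seg in segments:
        if seg.confidence >= threshold:
            # Confident machine output goes straight to air.
            yield seg.text
        else:
            # Shaky output (noise, accents, slurred words) gets a human pass first.
            yield human_edit(seg.text)


# Usage: a stand-in "editor" that fixes one known misrecognition.
fixes = {"typhoon warming": "typhoon warning"}
segments = [Segment("Good evening, Nagasaki.", 0.97), Segment("typhoon warming", 0.62)]
print(list(hybrid_captions(segments, lambda text: fixes.get(text, text))))
# ['Good evening, Nagasaki.', 'typhoon warning']
```

Gating on confidence ties the human workload to how often the recognizer struggles, which is exactly where the long-term staffing cost lives. A real live pipeline would also have to cap editing latency, which this sketch ignores.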

Why Local TV Stations in Japan Despise Traditional Subtitling

Look, major broadcasters manage subtitles, but locals? Forget it. Equipment alone costs a fortune, and staff shortages kill live captioning. Muneya Ichise from SI-com nails it:

“Over 100 local TV channels in Japan face barriers in providing subtitles for live programs due to the high cost of equipment and limitations of personnel.”

That’s community lifeline stuff — local news on disasters, festivals, school boards. Without subs, huge chunks of viewers tune out. Aging Japan (30% over 65) amplifies this; it’s not charity, it’s smart business to keep eyes glued.

AI-Mimi flips the script. It was piloted at Okinawa University and demoed live in Nagasaki in December 2021. Big fonts, 10 lines down the right side of the screen, not that puny bottom bar everyone hates. The Deaf community rated it highly; stations love the freedom from dedicated hardware.
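How AI-Mimi actually lays out that 10-line panel isn’t described, but the basic idea is easy to sketch. This Python version assumes a simple rolling buffer where new lines push the oldest ones off the top; the `CaptionPanel` class and the 12-characters-per-line width are hypothetical, and real Japanese line breaking would also need kinsoku rules (no line starting with closing punctuation), which this ignores.

```python
from collections import deque


class CaptionPanel:
    """Rolling side-panel caption display (hypothetical layout model)."""

    def __init__(self, max_lines: int = 10, chars_per_line: int = 12) -> None:
        # deque(maxlen=...) silently drops the oldest line once the panel is full.
        self.lines = deque(maxlen=max_lines)
        self.chars_per_line = chars_per_line  # assumed width, not AI-Mimi's spec

    def push(self, caption: str) -> None:
        # Japanese text has no spaces between words, so wrap by character count.
        for start in range(0, len(caption), self.chars_per_line):
            self.lines.append(caption[start:start + self.chars_per_line])

    def render(self) -> str:
        # Top line is the oldest still on screen; bottom line is the newest.
        return "\n".join(self.lines)


panel = CaptionPanel()
for i in range(15):
    panel.push(f"line {i}")
print(panel.render())  # shows "line 5" through "line 14"
```

Ten visible lines means a viewer who glances away for a few seconds can still catch up, which a one- or two-line bottom bar can’t offer.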

But.

Here’s my unique angle, one you won’t find in the press-release glow: this echoes the U.S. closed-captioning rollout of the 1970s and ’80s. Back then, captioning was a luxury: viewers needed pricey set-top decoders, big networks carried most captioned shows, and local stations languished. Captions had to be squeezed into line 21 of the NTSC signal, and accessibility stalled for years until decoder chips became standard in TV sets in the early 1990s. AI-Mimi? It’s the cheap decoder chip of our era: hybrid smarts that bypass hardware hell. Bold prediction: if accuracy holds up in typhoon chaos or dialect-heavy rural news, we’ll see this exported to India, Brazil, anywhere local TV scrapes by.

Is AI-Mimi Accurate Enough for Live Chaos?

Skeptical veteran hat on. Azure’s speech-to-text shines in demos, but live TV? Slurred words, accents, background noise: that’s where AI stumbles. Humans compensate, sure, but ISCEC’s pool of “specialized personnel” has limits. Scale to 100+ stations? They’ll need an army. Cost-efficient now, maybe, but who foots the human bill long-term? SI-com makes money here, Azure rakes in cloud fees, Microsoft gets the PR. Viewers win, but follow the yen.

They iterated on feedback: bigger text, better placement. The December demo got rave reviews from Deaf users, confirming it met their needs. Ichise again:

“We are so surprised that we got positive feedback from so many people, commending the tech innovation that plays an important role in promoting the use of subtitles across all live broadcasts and ensuring accessible experiences for the communities.”

Surprised? C’mon, users have been begging for this. The PR spin calls it “innovative,” but it’s pragmatic engineering: humans plus AI, not sci-fi.

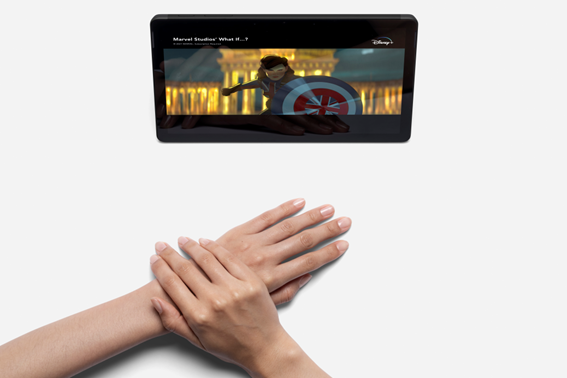

Global context seals it. In the UK, about 10% of BBC viewers use subtitles regularly, rising to 35% online, and most of them aren’t hard of hearing. Japan likely mirrors that: subtitles help all viewers, especially on streaming. Social media videos? Same demand. AI-Mimi isn’t alone, either. Think Google’s Live Caption, but tailored, hybrid, and local.

Can AI-Mimi Spread Beyond Japan?

Short answer: probably, if costs stay low. But the cynic in me kicks in: Microsoft’s involved, so expect Azure lock-in. Open-source alternatives? Nah, this is proprietary sauce. The historical parallel bites back here: early caption tech got commoditized, but will AI-Mimi? I doubt it, at least not soon. Stations gain flexibility with no capex; communities get access to the news. Win-win, until the first big flub, say a mis-subtitled earthquake alert.

Tested extensively, user-approved. The pilots prove viability. Yet 20 years in the Valley trenches taught me this: pilots dazzle, production humbles. Who’s actually making money? ISCEC scales its services, Azure bills for compute. Deaf viewers win, but only indirectly.

Japan’s TV ecosystem: NHK leads, local stations fill the gaps. Subtitles are all but mandatory for the majors and an optional pain for everyone else. AI-Mimi democratizes that. Imagine U.S. PBS affiliates or rural Indian channels adopting it. But what about accuracy with pidgin English or Hindi dialects? The hybrid model scales only if the outsourced typist pool goes global too.

Deeper dive: the sign language subset (about 70,000 users) still needs interpreters, but written Japanese covers most viewers. The 10-line right-side display? Genius for dense information, and it blocks less of the picture. Feedback loops refined it: real users, not lab rats.

Frequently Asked Questions

What is AI-Mimi and how does it work?

AI-Mimi pairs Microsoft Azure AI speech recognition with human editors for real-time subtitles on live Japanese TV, slashing costs for local stations.

Will AI-Mimi subtitles work for all hearing-impaired viewers in Japan?

It targets viewers who prefer written Japanese (most hard-of-hearing people), with big fonts and optimized placement; sign language users may still need interpreters.

Is AI-Mimi available outside Japan?

It’s being piloted in Japan for now, but the hybrid model could adapt globally if Azure’s speech recognition handles the local languages.