Biology’s transformer revolution is here.

Training mRNA language models across 25 species—that’s the feat OpenMed just pulled off for a mere $165. Picture this: you’re dreaming up a new therapeutic protein, and by afternoon, you’ve got codon-optimized DNA ready for the lab. No hand-wavy rules of thumb. No proprietary black boxes. Just pure AI, slurping up natural sequences, learning biases species by species, spitting out optimized code that cells actually express.

It’s like giving biology the same cheat code that turned text prediction into ChatGPT. Transformers conquered words; now they’re cracking life’s genetic script. And OpenMed didn’t just theorize—they built it, tested it, open-sourced it.

What OpenMed Actually Built

Three stages, chained end to end. First, ESMFold, Meta’s battle-tested structure predictor, predicts the protein’s 3D fold, averaging a pLDDT of 0.79 across 30 chains. Solid, batchable pipeline there. Then ProteinMPNN from the Baker Lab designs amino acid sequences for target backbones such as the 7K00 scaffold, hitting 42% sequence recovery. Established tools, but here’s the magic: their custom mRNA optimization.
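Assuming the 42% figure is native sequence recovery, the usual ProteinMPNN benchmark, it is commonly computed as the fraction of backbone positions where the designed residue matches the native one. A minimal sketch of that definition; OpenMed’s exact evaluation script isn’t covered here, and the sequences below are made up (not 7K00):

```python
def sequence_recovery(native: str, designed: str) -> float:
    """Fraction of positions where the designed residue matches the native residue."""
    if len(native) != len(designed):
        raise ValueError("sequences must be the same length (one residue per backbone position)")
    if not native:
        raise ValueError("sequences must be non-empty")
    matches = sum(n == d for n, d in zip(native, designed))
    return matches / len(native)


# Made-up 10-residue example: 5 of 10 positions match.
native = "MKTAYIAKQR"
designed = "MKSAFIEKLK"
print(f"recovery = {sequence_recovery(native, designed):.0%}")  # recovery = 50%
```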

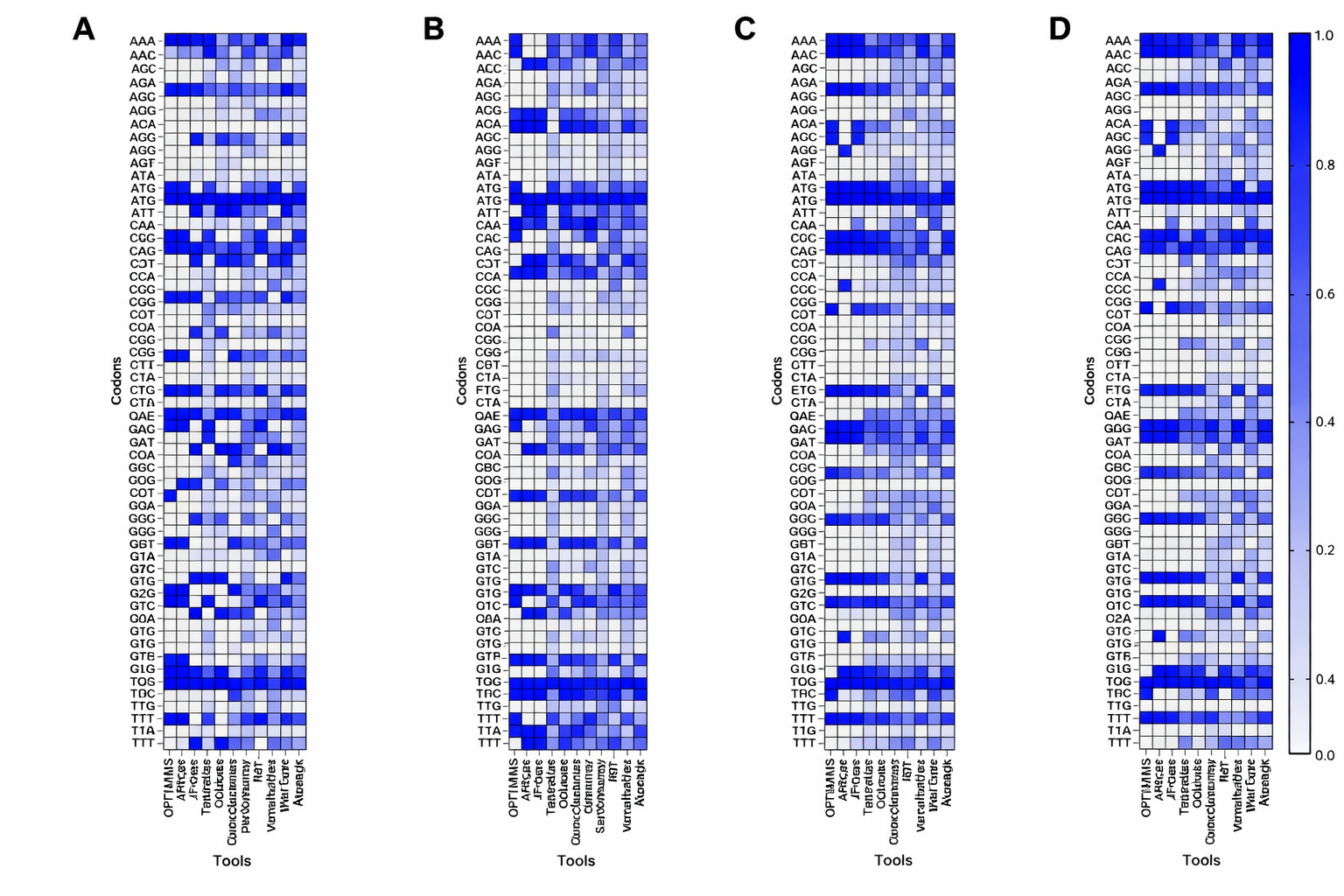

CodonRoBERTa-large-v2. Perplexity 4.10. Spearman correlation with the Codon Adaptation Index (CAI) of 0.40. Crushes ModernBERT. Trained on 250k coding sequences, then scaled to 381k sequences across 25 species in 55 GPU-hours. Four production models. Species-conditioned. Few, if any, other open-source projects offer that.
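What does a perplexity of 4.10 mean? Perplexity is the exponential of the average negative log-likelihood per token, so lower is better, and a model guessing uniformly over all 64 codons would score 64. A minimal sketch of the textbook formula only; how OpenMed scores a masked model (which positions, how special tokens are handled) isn’t covered here:

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """Perplexity = exp(mean negative log-likelihood per token), natural log."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


# A model that spreads probability evenly over all 64 codons scores perplexity 64.
uniform = [math.log(1 / 64)] * 100
print(round(perplexity(uniform), 2))  # 64.0

# A geometric-mean probability of 1/4.10 on the true codon gives perplexity 4.10:
# roughly as uncertain as choosing uniformly among about four codons per position.
sharper = [math.log(1 / 4.10)] * 100
print(round(perplexity(sharper), 2))  # 4.1
```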

“Imagine going from a therapeutic protein concept to a synthesis-ready, codon-optimized DNA sequence in an afternoon.”

That’s straight from their post. And they delivered.

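But wait, why codons? Amino acids are the what; codons are the how. There are sixty-four triplets of A, C, G, and T but only 20 amino acids (plus stop signals), so the code is degenerate as hell: one protein, gazillions of possible DNA sequences. Wrong codons? Your cell chokes and can express up to 100x less. Pfizer’s COVID shot was codon-optimized for human cells. OpenMed’s models learn these preferences from data, not from lookup tables.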
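To put “gazillions” in numbers: under the standard genetic code, the number of DNA sequences encoding a protein is the product of the synonymous-codon counts for each residue. A small sketch using the standard code (NCBI translation table 1); the example peptide is arbitrary:

```python
from collections import Counter
from itertools import product
from math import prod

# Standard genetic code (NCBI translation table 1). Codons are enumerated in
# TCAG order with the first base varying slowest; '*' marks a stop codon.
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product("TCAG", repeat=3), AMINO)}

# How many codons encode each amino acid (e.g. Leu: 6, Met: 1, stop: 3).
SYNONYMS = Counter(CODON_TABLE.values())


def count_encodings(protein: str, include_stop: bool = True) -> int:
    """Number of distinct coding sequences that translate to `protein`."""
    unknown = set(protein) - set(SYNONYMS)
    if unknown:
        raise ValueError(f"unknown residue codes: {sorted(unknown)}")
    n = prod(SYNONYMS[aa] for aa in protein)
    # Any of the three stop codons can terminate the sequence.
    return n * SYNONYMS["*"] if include_stop else n


print(count_encodings("MKTAYIAKQR"))  # 55296 for a 10-residue peptide
```

The count multiplies with every residue, which is why picking good codons is a search problem rather than a lookup.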

Transformers for Codons: BERT vs. RoBERTa Throwdown

Codons aren’t sentences. Triplets, positional quirks, species quirks. BERT-tiny baseline? Meh, 6M params. ModernBERT-base (90M, RoPE for long context)? Efficient, but nah. CodonRoBERTa-base (92M)? Winner among the base-size models. They iterated ruthlessly on architectures and hyperparameters, then scaled up, landing on large-v2 as champ.
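A natural way to respect “triplets” is one token per codon, which keeps the reading frame explicit, with a species tag prepended since the models are species-conditioned. A minimal sketch of that idea; the vocabulary layout, token names, and species list are hypothetical, and OpenMed’s actual tokenizer may differ:

```python
from itertools import product

# Hypothetical vocabulary layout: special tokens, then species tags, then 64 codons.
SPECIAL = ["<pad>", "<mask>", "<cls>", "<eos>"]
SPECIES = ["e_coli", "s_cerevisiae", "h_sapiens"]  # illustrative subset, not OpenMed's list
CODONS = ["".join(c) for c in product("ACGT", repeat=3)]
VOCAB = {tok: i for i, tok in enumerate(SPECIAL + [f"<{s}>" for s in SPECIES] + CODONS)}


def tokenize_cds(cds: str, species: str) -> list[int]:
    """Split a coding sequence into codons and prepend a species-conditioning token."""
    cds = cds.upper().replace("U", "T")  # accept mRNA-style input too
    if len(cds) % 3:
        raise ValueError("coding sequence length must be a multiple of 3")
    codons = [cds[i:i + 3] for i in range(0, len(cds), 3)]
    tokens = ["<cls>", f"<{species}>", *codons, "<eos>"]
    # A KeyError here flags an unknown species tag or a non-ACGT codon.
    return [VOCAB[t] for t in tokens]


print(tokenize_cds("ATGAAATAA", "e_coli"))  # [2, 4, 21, 7, 55, 3]
```

Codon-level tokens keep sequences short (one token per three bases) and make every masked prediction a choice among valid codons.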

Here’s my hot take, absent from their write-up: this mirrors NLP’s early days. Remember when RoBERTa edged BERT, paving ESM’s path? Same playbook. Biology’s getting its fine-tune frenzy. Bold prediction: in two years, AI codon models design vaccines faster than wet labs. We’re not tweaking genomes anymore; we’re prompting them.

Surprises? RoBERTa thrived without ModernBERT’s newer bells and whistles. Efficiency ruled. And scaling to multiple species? Trivial compute, massive unlock: E. coli to human, one system.

The pipeline ties it all together. Input: a protein concept. Output: a DNA FASTA file. Runnable code on GitHub. Transparent flops, full evals. Not hype. Build log.
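The last hop, from an optimized coding sequence to a FASTA file, is the simplest part. A minimal sketch of a FASTA writer; the header format (design ID plus species tag) is a hypothetical convention rather than OpenMed’s, and 60-column wrapping is a common choice, not a requirement:

```python
def to_fasta(records: dict[str, str], width: int = 60) -> str:
    """Format {header: sequence} pairs as FASTA, wrapping sequence lines at `width` characters."""
    if width < 1:
        raise ValueError("width must be positive")
    lines = []
    for header, seq in records.items():
        lines.append(f">{header}")
        # Slice the sequence into fixed-width chunks.
        lines.extend(seq[i:i + width] for i in range(0, len(seq), width))
    return "\n".join(lines) + "\n"


# Hypothetical header convention: design ID plus the target species.
fasta = to_fasta({"design_001 species=h_sapiens": "ATG" + "GCC" * 40 + "TAA"})
print(fasta)
```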

Why Multi-Species Matters—And Costs Pennies

Twenty-five organisms. Bacteria. Yeast. Mammals. One conditioned model suite. Rather than training a separate model per species, condition on a species token. Perplexity holds. CAI soars.
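CAI, the yardstick behind those numbers, is a classic codon-usage metric introduced by Sharp and Li in 1987: each codon gets a weight w equal to its count divided by the count of the most-used synonymous codon in a reference set of genes from the host, and a gene’s CAI is the geometric mean of w over its codons. The reference set is what makes CAI species-specific. A minimal sketch; the pseudocount (which keeps unseen codons from getting weight zero) and the toy reference set are choices of this example, and how OpenMed correlates model scores with CAI isn’t covered here:

```python
import math
from collections import Counter
from itertools import product

# Standard genetic code (NCBI table 1), TCAG order, first base slowest; '*' = stop.
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product("TCAG", repeat=3), AMINO)}
SKIP = {"M", "W", "*"}  # single-codon amino acids and stops carry no codon-choice signal


def split_codons(cds: str) -> list[str]:
    return [cds[i:i + 3] for i in range(0, len(cds) - len(cds) % 3, 3)]


def relative_adaptiveness(reference: list[str], pseudocount: float = 0.5) -> dict[str, float]:
    """w(codon) = count(codon) / count(most-used synonymous codon) in a reference gene set."""
    counts = Counter(c for cds in reference for c in split_codons(cds))
    best: dict[str, float] = {}
    for codon, aa in CODON_TABLE.items():
        best[aa] = max(best.get(aa, 0.0), counts[codon] + pseudocount)
    return {codon: (counts[codon] + pseudocount) / best[aa] for codon, aa in CODON_TABLE.items()}


def cai(cds: str, w: dict[str, float]) -> float:
    """Geometric mean of w over the informative codons of one gene."""
    logs = [math.log(w[c]) for c in split_codons(cds) if CODON_TABLE[c] not in SKIP]
    if not logs:
        raise ValueError("no informative codons")
    return math.exp(sum(logs) / len(logs))


# Toy reference set (made up) that always uses CTG for Leu and AAA for Lys.
w = relative_adaptiveness(["ATGCTGCTGCTGAAAAAATAA", "ATGCTGAAACTGAAATAA"])
print(cai("ATGCTGAAATAA", w))  # 1.0: only preferred codons
print(round(cai("ATGCTAAAGTAA", w), 3))  # 0.101: rare Leu and Lys codons
```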

Cost? 55 A100 GPU-hours. $165. That’s lunch for a team, not a project. OpenMed calls out the barrier drop—anyone with Colab can iterate now.
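The two reported figures imply an hourly rate, which is handy for checking the claim against your own provider (actual A100 pricing varies by cloud and commitment):

$$
\frac{\$165}{55\ \text{A100 GPU-hours}} = \$3.00\ \text{per GPU-hour}
$$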

Look, Big Pharma hoards codon tools. This democratizes them. Indie biohackers designing therapeutics? Incoming. (Though the FDA won’t love garage mRNA just yet.)

And the workflow? Dockerized. ESMFold → ProteinMPNN → CodonRoBERTa → synthesis file. Afternoon turnaround.

Is This Biotech’s iPhone Moment?

Short answer: yes. Proteins were artisanal. Now? Programmable. Like apps on iOS: structure predicts, sequences generate, codons optimize. Stack ’em.

OpenMed’s not first—AlphaFold kicked it off—but end-to-end, multi-species, open? Fresh. Their transparency slays corporate spin. No “state-of-the-art” fluff. Just numbers, code, “here’s what bombed.”

Critique time: folding and design lean on others. Fair enough; they stand on the shoulders of giants. But the codon work? Original. Next? Diffusion for sequences? Multimodal bio?

What’s next mirrors Part I’s survey. ESMFold and ProteinMPNN will keep evolving. But codon LLMs? They’ll fold in evolution simulations and expression predictors. Exponential.

The Real Edge: From Hype to Hackable

Energy here isn’t PR polish. It’s runnable truth. They wandered from BERT to RoBERTa and landed somewhere better. That’s science.

Unique angle: this isn’t just a set of tools. It’s a platform. Like PyTorch for proteins. Fork, fine-tune per species, deploy. Biotech shifts from PhD-months to prompt-seconds.

Wonder hits: imagine AI dreaming proteins for rare diseases, auto-optimizing for your cell line. $165 today; pennies tomorrow.

Frequently Asked Questions

What is CodonRoBERTa and how does it work?

CodonRoBERTa-large-v2 is a RoBERTa transformer trained on codon sequences that predicts codon choices favoring strong protein expression. It beats the baselines on perplexity (4.10) and on Spearman correlation with CAI (0.40), and it is conditioned on 25 species.

How much does it cost to train mRNA language models like this?

OpenMed trained four multi-species models in 55 GPU-hours for $165 on A100s. That fits almost any cloud budget.

Will OpenMed’s pipeline speed up drug discovery?

Likely. The open-source pipeline runs end to end, from protein concept to DNA in hours, which puts codon optimization for therapies and vaccines within reach of far more teams.