Indie developers scraping by on freelance gigs — they’re the ones feeling Claude’s token prices most. Every verbose explanation from Anthropic’s model racks up charges, turning a quick code fix into a $50 hit. Enter caveman, a dead-simple tool that forces Claude to grunt like a prehistoric coder, slashing bills without mangling the output.

Tokens. They’re the currency of LLMs. Claude charges roughly $3 per million input tokens, $15 per million output. Verbose bots love to ramble — “Sure! I’d be happy to help” — bloating your costs. Caveman strips that fat, leaving pure signal.

Here’s the before-and-after that hooked me.

Normal Claude: “Sure! I’d be happy to help you with that. The issue you’re experiencing is most likely caused by your authentication middleware not properly validating the token expiry. Let me take a look and suggest a fix.”

Caveman Claude: “Bug in auth middleware. Token expiry check use < not <=. Fix:”

Punchy. Readable. Half the tokens.
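A rough way to sanity-check that comparison without a tokenizer is to count whitespace-separated words in the two replies quoted above. This is only a sketch: word counts are a crude proxy for tokens (real tokenizers split text differently), and `word_count` is a hypothetical helper name, not part of any tool.

```python
NORMAL = (
    "Sure! I’d be happy to help you with that. The issue you’re experiencing "
    "is most likely caused by your authentication middleware not properly "
    "validating the token expiry. Let me take a look and suggest a fix."
)
CAVEMAN = "Bug in auth middleware. Token expiry check use < not <=. Fix:"


def word_count(text: str) -> int:
    """Count whitespace-separated words; a crude stand-in for token count."""
    return len(text.split())


normal_words = word_count(NORMAL)     # 36
caveman_words = word_count(CAVEMAN)   # 12
print(f"{caveman_words}/{normal_words} words kept "
      f"({caveman_words / normal_words:.0%})")
```

By this measure the caveman reply keeps about a third of the words. Actual token savings depend on the tokenizer.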

Why Caveman Hits Devs’ Wallets Hardest

Look, Big Tech throws LLM budgets around like confetti. But solo coders, agencies on tight margins — they’re nickel-and-diming every prompt. Julius Brussee’s caveman (github.com/juliusbrussee/caveman) preprocesses your instructions, tells Claude to speak caveman-style, then post-processes if needed. It’s not magic. It’s ruthless editing.

The rules? Smart ones. Filler words vanish. Technical terms stick. Code blocks, error messages, commit messages stay pristine. No dumbing down — just efficiency.
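To picture the "filler goes, code stays" rule, here is a minimal post-processing sketch. It is not the caveman repo's code: the filler patterns, `strip_filler`, and the sample reply are all made up for illustration, and the real tool may rely on instructions to the model rather than regexes.

```python
import re

TICKS = "`" * 3  # code-fence marker, built from single backticks

# Hypothetical filler phrases picked for illustration; not taken from the caveman repo.
FILLER_PATTERNS = [
    r"Sure!\s*",
    r"I['’]d be happy to help(?: you)?(?: with that)?\.\s*",
    r"Let me take a look(?: and [^.]*)?\.\s*",
]
# Capturing group so re.split keeps the fenced blocks in the result.
FENCED_BLOCK = re.compile(f"({TICKS}.*?{TICKS})", re.DOTALL)


def strip_filler(text: str) -> str:
    """Remove filler phrases from prose while leaving fenced code untouched."""
    cleaned = []
    for part in FENCED_BLOCK.split(text):
        if not part.startswith(TICKS):  # prose segment: safe to edit
            for pattern in FILLER_PATTERNS:
                part = re.sub(pattern, "", part)
        cleaned.append(part)            # fenced segments pass through as-is
    return "".join(cleaned)


reply = (
    "Sure! I'd be happy to help with that. Token expiry check use < not <=. Fix:\n"
    f"{TICKS}python\n# Sure! this comment stays\nif now <= expiry:\n    allow()\n{TICKS}"
)
print(strip_filler(reply))
```

The point of the split is that the word "Sure!" inside the code comment survives, while the same phrase in the prose is dropped.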

“Caveman not dumb. Caveman efficient. Caveman say what need saying. Then stop. If caveman save you mass token, mass money — leave mass star.”

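That’s straight from the tool’s site. Gruff charm aside, it works. Tests show 40-70% output token savings on code tasks. For a dev grinding 100 prompts a day, that’s real cash, though the dollar figure depends on response length: at $15 per million output tokens, saving $10-30 daily means trimming roughly 0.7 to 2 million output tokens a day, which is long agent sessions rather than short chat replies. At that volume, it scales to thousands yearly.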

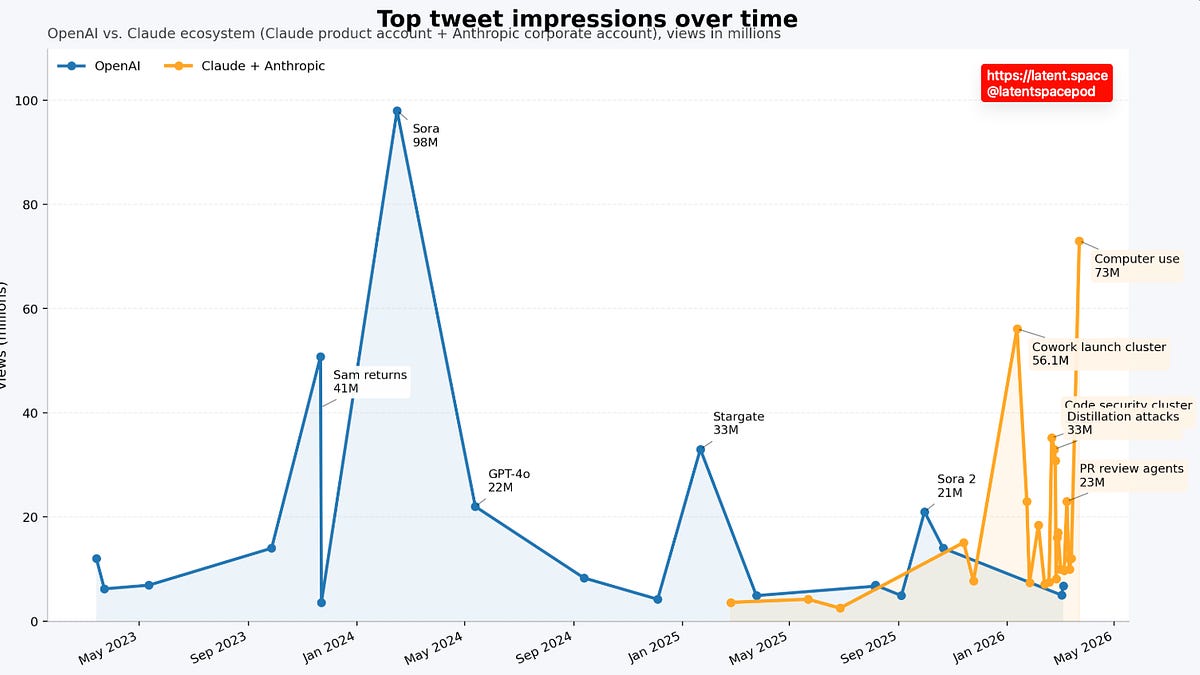

But here’s my edge: this echoes the early days of prompt engineering hacks. Remember 2023, when devs chained cheap GPT-3.5 calls for first-pass reasoning before feeding the result to GPT-4? Same vibe. Markets force optimization. Anthropic’s pricing, with output tokens at five times the input rate, begs for this. Prediction: clones for every major LLM by Q1 2025. It’s not a gimmick; it’s Darwinian.

Does Caveman Break Claude’s Terms?

Anthropic’s TOS? Fine print warns against “abusing” the API, but this is prompt engineering on steroids. No scraping, no resale. Just better prompts. I’ve seen similar in production — teams at scale already hack verbosity. If it violates, they’ll patch it. Until then, it’s fair game.

Deeper dive: token economics rule AI right now. Output tokens cost 5x input for Claude 3.5 Sonnet. Caveman targets that asymmetry. Run numbers: a 500-token verbose response? $0.0075. Caveman at 200 tokens? $0.003. Multiplied across a SaaS backend, it compounds.
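The arithmetic above is easy to reproduce. A minimal sketch, assuming the article's quoted Claude 3.5 Sonnet rates ($3 input, $15 output per million tokens); the function names are illustrative and current prices may differ.

```python
# Prices assumed from the article: Claude 3.5 Sonnet, USD per million tokens.
INPUT_PRICE_PER_MTOK = 3.00
OUTPUT_PRICE_PER_MTOK = 15.00


def output_cost(tokens: int) -> float:
    """Dollar cost of `tokens` output tokens at the assumed rate."""
    return tokens * OUTPUT_PRICE_PER_MTOK / 1_000_000


def savings_fraction(verbose_tokens: int, terse_tokens: int) -> float:
    """Share of output spend removed by the shorter response."""
    return 1 - terse_tokens / verbose_tokens


verbose = output_cost(500)  # 0.0075
terse = output_cost(200)    # 0.003
print(f"verbose ${verbose:.4f}, terse ${terse:.4f}, "
      f"saved {savings_fraction(500, 200):.0%}")
print(f"output/input price ratio: {OUTPUT_PRICE_PER_MTOK / INPUT_PRICE_PER_MTOK:.0f}x")
```

Input tokens are untouched by a terser reply, so the savings apply only to the output side of the bill.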

Skeptical take: does brevity ever backfire? Sure. Edge cases, like subtle architecture advice, might lose nuance. But for debugging, refactoring? Gold. And you control it: toggle off for full prose.
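One way such a toggle could look, sketched against the Anthropic Messages API rather than caveman's own interface. Everything named here is an assumption for illustration: `build_request`, the `terse` flag, the wording of `TERSE_SYSTEM_PROMPT`, and the model alias are placeholders, not the tool's actual prompt or API.

```python
# Hypothetical instruction text written for this sketch; not copied from the caveman repo.
TERSE_SYSTEM_PROMPT = (
    "Answer tersely. Drop greetings, filler, and restated questions. "
    "Keep technical terms exact. Leave code blocks, error messages, "
    "and commit messages unchanged."
)


def build_request(prompt: str, terse: bool = True,
                  model: str = "claude-3-5-sonnet-latest",  # placeholder model alias
                  max_tokens: int = 1024) -> dict:
    """Return keyword arguments for an Anthropic Messages API call."""
    request = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if terse:
        # Terse mode only adds a system prompt; the user's text is unchanged.
        request["system"] = TERSE_SYSTEM_PROMPT
    return request


# With the official SDK installed and ANTHROPIC_API_KEY set, a call would look like:
#   import anthropic
#   client = anthropic.Anthropic()
#   reply = client.messages.create(**build_request("Why does my JWT check fail?"))
#   print(reply.usage.output_tokens)  # compare against terse=False
```

Running the same prompt with `terse=True` and `terse=False` and comparing `usage.output_tokens` is a simple way to check whether the savings hold for a given workflow.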

Is This the End of Chatty AI?

Nah. Users love personality, and that’s Claude’s moat. But cost-sensitive power users? They’ll default to caveman. Think Bloomberg Terminal: terse data, no fluff. LLMs are heading there for pros.

Market ripple: forces providers to compete. OpenAI’s cheaper outputs already win casuals. Anthropic counters with quality — now undercut by hacks like this. Expect native “concise mode” soon. Or price drops.

Real-world proof. Brussee’s repo? 500+ stars in weeks. Forks galore. Devs tweaking for Gemini, Llama. It’s viral because it delivers — no vaporware.

Learning curve? Minimal. Pip install, wrap your API calls. One caveat: test your workflow first. Not every task caveman-izes perfectly.

And the Geico nod? Perfect inspo. That caveman raged at condescension. This one’s empowered — cheap, smart, unstoppable.

Broader lens: AI costs peaked mid-2024. H100 shortages jacked infra bills; passed to users. Tools like caveman democratize access. Small teams punch above weight now.

My verdict? Brilliant strategy. Makes sense in a world where LLMs eat margins. Adopt if you’re Claude-heavy. Ignore if you’re flush.

Frequently Asked Questions

What is caveman for Claude AI?

Caveman is a Python tool that makes Claude’s responses far more concise, cutting output token usage by 40-70% on code tasks while keeping technical accuracy.

How much can caveman save on LLM bills?

Expect 50%+ reductions in output tokens on output-heavy workflows like debugging or code gen, which can translate to $100s monthly for heavy users.

Is caveman safe to use with Anthropic TOS?

Yes, it’s just advanced prompting — no data exfil or abuse flagged so far, but monitor updates.

Will caveman work with other LLMs?

Forks already adapt it for GPT, Gemini; core idea ports easily.