Picture this: it’s 2 a.m., pager screaming, and your entire AWS fleet’s S3 buckets are wide open to the internet because some IaC template slipped through review.

That’s not hyperbole. Happened to more teams than they’ll admit.

And here’s Infrastructure as Code with LLMs swooping in like the savior – or so the pitch goes. Generate controls, review code, enforce policies. Sounds slick. But I’ve been kicking tires in Silicon Valley for two decades, and every time Big Tech dangles ‘AI magic’ at devs, I ask: who’s actually cashing the checks here?

IaC flipped the script on cloud ops, sure. Treat servers like software. Git it up, version it, deploy consistently. No more snowflake servers from harried admins. Costs drop when you auto-spin down idle junk. Repeatable environments? Gold for CI/CD pipelines.

But.

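That blast radius. One typo in a Terraform file, and boom – prod’s compromised. Remember Capital One’s 2019 breach? A misconfigured firewall and an over-permissioned IAM role let an attacker walk off with data stored in S3. IaC didn’t cause that, but it makes that kind of stupid scalable.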

Why IaC Still Gives Me Nightmares

Risks pile up fast. Overly permissive IAM roles. Unencrypted EBS volumes. Firewall holes you could drive a truck through. Manual reviews? Laughable at scale. Teams drown in PRs, miss the gotchas.

Organizations preach ‘shift left’ – bake in security early. Tools like Checkov or tfsec help, but they’re rigid. Miss context. Can’t reason like a human (flawed as we are).
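What does the rigid, rule-based side actually look like? Here’s a toy checker for the risks above, run over a CloudFormation template already parsed into a Python dict. It’s a sketch under my own assumptions, not how Checkov or tfsec work internally: the rules, the allowlisted ports, and names like `find_issues` are all hypothetical.

```python
"""Toy static checks over a CloudFormation template parsed into a dict.
Sketch only: the rule set and port allowlist are assumptions, not a real scanner."""
from typing import Any

# Assumption: only these ports may be opened to the whole internet.
PUBLIC_OK_PORTS = {80, 443}


def _as_list(value: Any) -> list:
    # CloudFormation accepts a single item or a list in many places.
    return value if isinstance(value, list) else [value]


def find_issues(template: dict) -> list[str]:
    issues: list[str] = []
    for name, res in template.get("Resources", {}).items():
        rtype = res.get("Type", "")
        props = res.get("Properties", {})

        if rtype == "AWS::IAM::Policy":
            statements = props.get("PolicyDocument", {}).get("Statement", [])
            for stmt in _as_list(statements):
                # Only catches the literal "*" action, not "s3:*" or NotAction tricks.
                if stmt.get("Effect") == "Allow" and "*" in _as_list(stmt.get("Action", [])):
                    issues.append(f"{name}: IAM statement allows every action")

        elif rtype == "AWS::EC2::Volume":
            # Flags any volume that doesn't set Encrypted to true itself,
            # even if account-level EBS default encryption would cover it.
            if str(props.get("Encrypted")).lower() != "true":
                issues.append(f"{name}: EBS volume not explicitly encrypted")

        elif rtype == "AWS::EC2::SecurityGroup":
            for rule in props.get("SecurityGroupIngress", []):
                from_port, to_port = rule.get("FromPort"), rule.get("ToPort")
                # Acceptable only if the rule opens exactly one allowlisted port.
                single_ok = from_port == to_port and from_port in PUBLIC_OK_PORTS
                if rule.get("CidrIp") == "0.0.0.0/0" and not single_ok:
                    issues.append(f"{name}: ports {from_port}-{to_port} open to 0.0.0.0/0")
    return issues


demo = {
    "Resources": {
        "AdminPolicy": {
            "Type": "AWS::IAM::Policy",
            "Properties": {"PolicyDocument": {"Statement": [
                {"Effect": "Allow", "Action": "*", "Resource": "*"}]}},
        },
        "DataVolume": {"Type": "AWS::EC2::Volume", "Properties": {"Size": 100}},
        "WebSG": {
            "Type": "AWS::EC2::SecurityGroup",
            "Properties": {"SecurityGroupIngress": [
                {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22, "CidrIp": "0.0.0.0/0"}]},
        },
    }
}

for issue in find_issues(demo):
    print(issue)
```

Notice it flags SSH open to the world without any way of knowing whether a compensating control lives somewhere else. That’s the “miss context” complaint in miniature.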

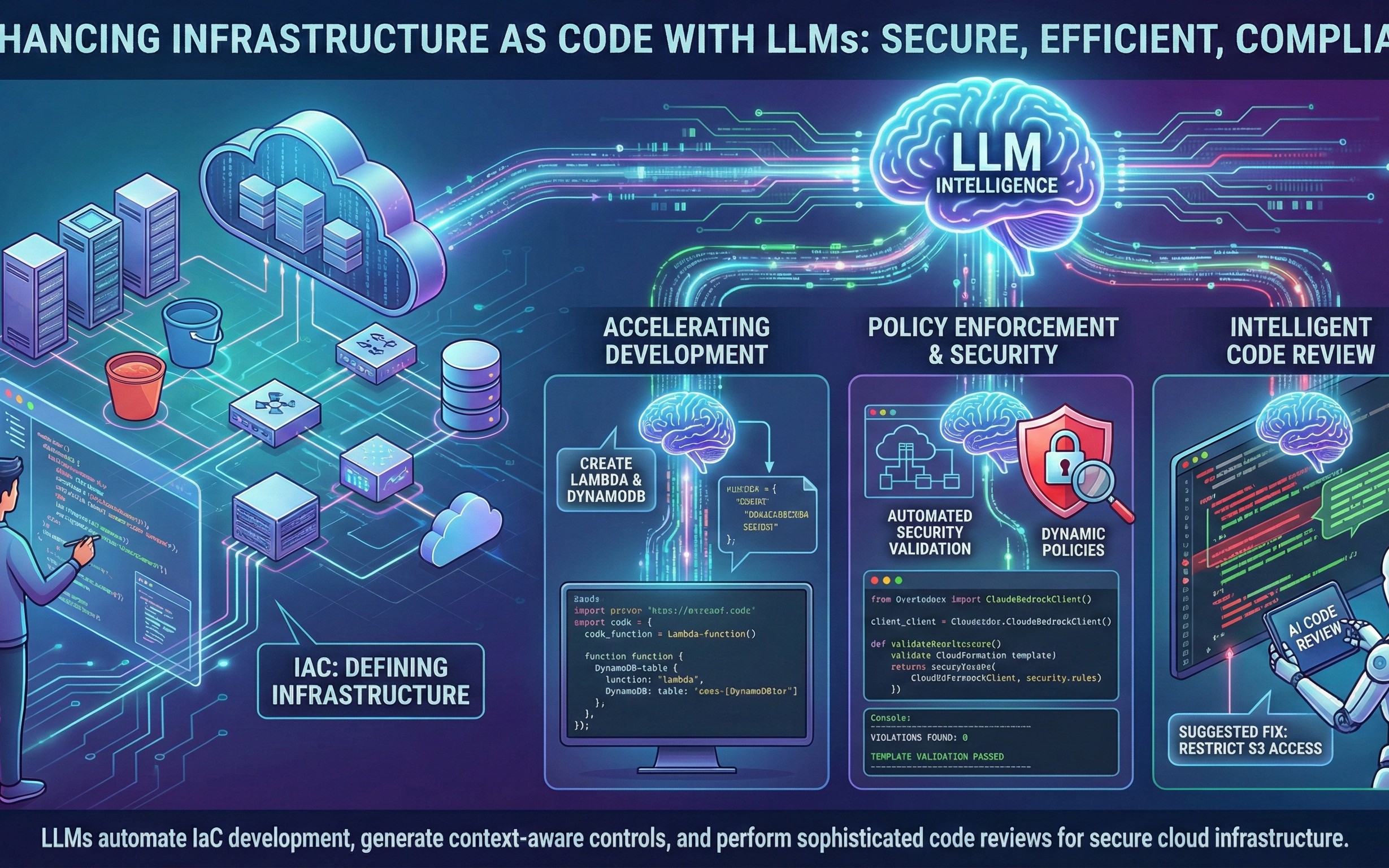

Enter LLMs. Claude, GPT-whatever. Big context windows swallow whole modules, sometimes whole repos. ‘Reason’ step-by-step. Generate CDK from English blather: “Gimme a Lambda hitting DynamoDB off S3 events.”

Boom, code spits out. Neat, right?

By generating context-aware controls and performing sophisticated code reviews, LLMs significantly enhance our ability to build secure and efficient cloud infrastructure.

That’s the hype line straight from the original pitch. Sounds authoritative. But let’s poke it.

LLMs accelerating IaC dev? Yeah, they autocomplete boilerplate. Describe your stack in prose, get CloudFormation JSON. Saves keystrokes. Helps noobs avoid basic goofs.

Example they tossed: plain English to CDK TypeScript. Table, Lambda, grants, event source. Clean enough. But what if your ‘requirements’ gloss over encryption? The LLM parrots them back, no questions asked.

Can LLMs Really Enforce Policies Without Hallucinating?

Policy gen’s the sexy bit. Intent-driven: “Block public S3. Enforce TLS everywhere.” LLM crafts checks, validates templates.
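Whoever writes them, human or model, “Block public S3. Enforce TLS everywhere.” should end up as plain, testable checks. A sketch, scoped to S3 only: `PublicAccessBlockConfiguration` and the deny-when-`aws:SecureTransport`-is-false bucket policy are real CloudFormation and IAM conventions; the function names are hypothetical, and it only matches bucket policies that point at their bucket with a plain `Ref`.

```python
"""Two intents ("block public S3", "enforce TLS") as deterministic checks over a
CloudFormation template dict. Scoped to S3 only; function names are hypothetical."""
from typing import Any

PAB_FLAGS = ("BlockPublicAcls", "BlockPublicPolicy", "IgnorePublicAcls", "RestrictPublicBuckets")


def _as_list(value: Any) -> list:
    return value if isinstance(value, list) else [value]


def blocks_public_access(bucket_props: dict) -> bool:
    # All four public access block flags must be explicitly true.
    pab = bucket_props.get("PublicAccessBlockConfiguration", {})
    return all(str(pab.get(flag)).lower() == "true" for flag in PAB_FLAGS)


def denies_plain_http(stmt: dict) -> bool:
    # The usual pattern: Deny whenever aws:SecureTransport is false.
    secure = stmt.get("Condition", {}).get("Bool", {}).get("aws:SecureTransport")
    return stmt.get("Effect") == "Deny" and str(secure).lower() == "false"


def check_s3_intents(template: dict) -> list[str]:
    resources = template.get("Resources", {})

    # Collect buckets that have a TLS-enforcing bucket policy attached via Ref.
    tls_enforced = set()
    for res in resources.values():
        if res.get("Type") != "AWS::S3::BucketPolicy":
            continue
        props = res.get("Properties", {})
        target = props.get("Bucket")
        statements = _as_list(props.get("PolicyDocument", {}).get("Statement", []))
        if isinstance(target, dict) and "Ref" in target and any(
            denies_plain_http(s) for s in statements
        ):
            tls_enforced.add(target["Ref"])

    failures = []
    for name, res in resources.items():
        if res.get("Type") != "AWS::S3::Bucket":
            continue
        if not blocks_public_access(res.get("Properties", {})):
            failures.append(f"{name}: public access not fully blocked")
        if name not in tls_enforced:
            failures.append(f"{name}: no bucket policy denying non-TLS requests")
    return failures
```

If an LLM drafts these, fine. The win is that they end up as code you can read, diff, and test, not as a model’s opinion.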

Proactive, they say. Ditch reactive scans post-deploy.

Here’s my unique gut punch, absent from the fluff: this echoes the NoOps dream circa 2012. Remember when Chef and Puppet promised self-healing infra? We got outages galore because ‘automation’ hid human slop. LLMs? Same trap. They’ll hallucinate secure-looking policies that crumble under audit. Bold prediction: first big IaC+LLM breach hits by 2025, courtesy of over-trusting AI reviews. Who’s liable? The vendor peddling the model, or your CISO?
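One cheap guard against hallucinated policies: never ship a generated check until it has flagged fixtures you know are bad and stayed quiet on fixtures you know are good. The harness, fixtures, and flawed check below are hypothetical; the flaw mimics a classic failure mode where a rule tests that a config block exists rather than what it says.

```python
"""Vet a generated check against known-bad and known-good fixtures before trusting it.
The harness, fixtures, and the flawed check are hypothetical illustrations."""
from typing import Callable, Optional

Check = Callable[[dict], list]


def bucket_template(pab: Optional[dict]) -> dict:
    # Minimal one-bucket CloudFormation template; pab=None omits the block entirely.
    props = {} if pab is None else {"PublicAccessBlockConfiguration": pab}
    return {"Resources": {"Logs": {"Type": "AWS::S3::Bucket", "Properties": props}}}


def validate_check(check: Check, known_bad: dict, known_good: dict) -> list[str]:
    problems = []
    for label, template in known_bad.items():
        if not check(template):  # a check must flag every known-bad fixture
            problems.append(f"missed known-bad fixture '{label}'")
    for label, template in known_good.items():
        if check(template):  # ...and stay quiet on every known-good one
            problems.append(f"false positive on known-good fixture '{label}'")
    return problems


def plausible_but_wrong(template: dict) -> list:
    # Reads fine in review, but only tests that the block exists,
    # not that every flag inside it is true.
    return [
        name
        for name, res in template["Resources"].items()
        if res["Type"] == "AWS::S3::Bucket"
        and not res.get("Properties", {}).get("PublicAccessBlockConfiguration")
    ]


ALL_ON = {"BlockPublicAcls": True, "BlockPublicPolicy": True,
          "IgnorePublicAcls": True, "RestrictPublicBuckets": True}

known_bad = {
    "no_block": bucket_template(None),
    "partial_block": bucket_template({**ALL_ON, "BlockPublicPolicy": False}),
}
known_good = {"full_block": bucket_template(ALL_ON)}

print(validate_check(plausible_but_wrong, known_bad, known_good))
# → ["missed known-bad fixture 'partial_block'"]
```

The flawed check passes a casual read and even catches the obvious case. Only the partial-config fixture exposes it.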

Teams need hybrid smarts. LLM suggests, human approves. Integrate with OPA or Sentinel for hard gates. Don’t let Claude play god.
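The hybrid split can be sketched as a merge gate. Assumptions: deterministic policy results (from OPA, Sentinel, or similar) arrive as a list of violations, LLM review output arrives as free-text findings, and approval is a boolean from your code-review tool. All names are hypothetical. The design choice that matters is that LLM findings get surfaced to the reviewer but can never block or unblock a merge on their own.

```python
"""Hybrid merge gate: deterministic policy results block, a human approves,
LLM findings are advisory only. All names are hypothetical."""
from dataclasses import dataclass, field


@dataclass
class ReviewInput:
    policy_violations: list[str]  # from a deterministic engine (OPA, Sentinel, ...)
    llm_findings: list[str]       # free-text LLM review output; may be wrong
    human_approved: bool          # from your code-review tool


@dataclass
class GateResult:
    allowed: bool
    notes: list[str] = field(default_factory=list)


def merge_gate(review: ReviewInput) -> GateResult:
    blockers = [f"policy: {v}" for v in review.policy_violations]
    if not review.human_approved:
        blockers.append("missing human approval")
    # LLM findings are attached for the reviewer but never change the decision.
    advisories = [f"advisory (LLM): {f}" for f in review.llm_findings]
    return GateResult(allowed=not blockers, notes=blockers + advisories)


print(merge_gate(ReviewInput([], ["IAM role looks broad"], human_approved=True)))
# → GateResult(allowed=True, notes=['advisory (LLM): IAM role looks broad'])
```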

Then there’s cost. Repo-scale reviews burn tokens on every PR. Fine for FAANG, killer for startups.
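Back-of-envelope math on what repo-scale reviews cost. Every number is an assumption: roughly 4 characters per token is a common rule of thumb for English text (code can tokenize differently), and the per-million-token prices are placeholders, not any vendor’s current rates.

```python
"""Back-of-envelope LLM review cost. Every number here is an assumption:
~4 chars/token is a rough rule of thumb, and prices are placeholders."""


def monthly_review_cost(
    chars_per_review: int,
    reviews_per_month: int,
    usd_per_m_input: float,
    usd_per_m_output: float,
    output_tokens_per_review: int = 2_000,
    chars_per_token: float = 4.0,
) -> float:
    input_tokens = chars_per_review / chars_per_token
    per_review = (input_tokens * usd_per_m_input
                  + output_tokens_per_review * usd_per_m_output) / 1_000_000
    return per_review * reviews_per_month


# Hypothetical: 400 KB of IaC, 400 PRs a month, placeholder prices of $3 / $15 per million tokens.
full_repo = monthly_review_cost(400_000, 400, 3.0, 15.0)
diff_only = monthly_review_cost(8_000, 400, 3.0, 15.0)  # ~8 KB of diff per PR
print(f"full repo: ${full_repo:.2f}/month, diff only: ${diff_only:.2f}/month")
# → full repo: $132.00/month, diff only: $14.40/month
```

Plug in your own numbers. The shape is what matters: full-repo review scales with repo size times PR count, diff-only review with the size of each change.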

Skeptical vet mode: the PR spin screams ‘monetize LLMs in DevOps.’ Pulumi and HashiCorp are eyeing this. Who profits? Cloud giants, billing more compute for AI-driven infra ops.

Devs get faster? Maybe. Secure? Jury’s out.

Does This Fix IaC’s Real Pain Points?

The learning curve is still steep. Terraform HCL? Warlock incantations. LLMs lower the bar – natural language to code. Onboard juniors quicker.

Ongoing maintenance? Live infrastructure drifts from its templates. LLMs can audit diffs and flag drift. Smart.
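The mechanical half of drift detection is just a diff between declared and observed state; `terraform plan` and CloudFormation’s drift detection already produce this kind of report. A plausible job for the LLM is explaining the diff, not computing it. A minimal sketch, assuming both sides are already flattened into `{resource_id: {property: value}}` dicts (a hypothetical shape; real state files are far messier):

```python
"""Declared-vs-observed drift diff. Assumes both sides are already flattened
into {resource_id: {property: value}} dicts, a hypothetical shape."""


def detect_drift(declared: dict, observed: dict) -> dict:
    report = {}
    for rid in sorted(declared.keys() | observed.keys()):
        if rid not in observed:
            report[rid] = ["declared in code but missing from the live environment"]
        elif rid not in declared:
            report[rid] = ["exists live but not in code (unmanaged)"]
        else:
            diffs = []
            want_props, got_props = declared[rid], observed[rid]
            for key in sorted(want_props.keys() | got_props.keys()):
                want, got = want_props.get(key), got_props.get(key)
                if want != got:
                    diffs.append(f"{key}: declared {want!r}, live {got!r}")
            if diffs:
                report[rid] = diffs
    return report


declared = {"logs-bucket": {"versioning": True, "public": False}}
observed = {
    "logs-bucket": {"versioning": True, "public": True},  # someone clicked in the console
    "temp-vm": {"size": "t3.large"},                      # never made it into code
}
for rid, findings in detect_drift(declared, observed).items():
    print(rid, findings)
```

Keep the deterministic diff as the source of truth; an LLM summary on top is a convenience, not evidence.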

Yet complexity lurks. Multi-cloud? LLM bakes in AWS bias. Vendor lock via hallucinated best practices.

Historical parallel: early GitHub Copilot. Hype exploded, then came the lawsuit over regurgitated licensed code and research flagging insecure suggestions. IaC+LLMs? Insecure scaffolding waiting to topple.

Bottom line – or close enough. LLMs juice IaC, but treat ’em like power tools, not crutches. Enforce human-in-the-loop review. Question the savings when breaches cost millions.

We’ve been here before. Valley loves shiny wrappers on old problems. Cash in while it lasts.

Frequently Asked Questions

What does Infrastructure as Code with LLMs mean?

IaC lets you define cloud resources in code and manage them like software; LLMs help generate, review, and secure that code.

Are LLMs safe for IaC security reviews?

They catch basics but hallucinate edge cases – always pair with static analyzers and human eyes.

Will LLMs replace Terraform experts?

Nah, they speed up grunt work but can’t handle custom enterprise policies without tuning.