Smoke hangs in the air of a dimly lit conference room in Palo Alto, where AI execs clink glasses over their latest ‘breakthrough,’ oblivious to a freshly released poll showing Americans want expert-level and superhuman AI regulated or shelved.

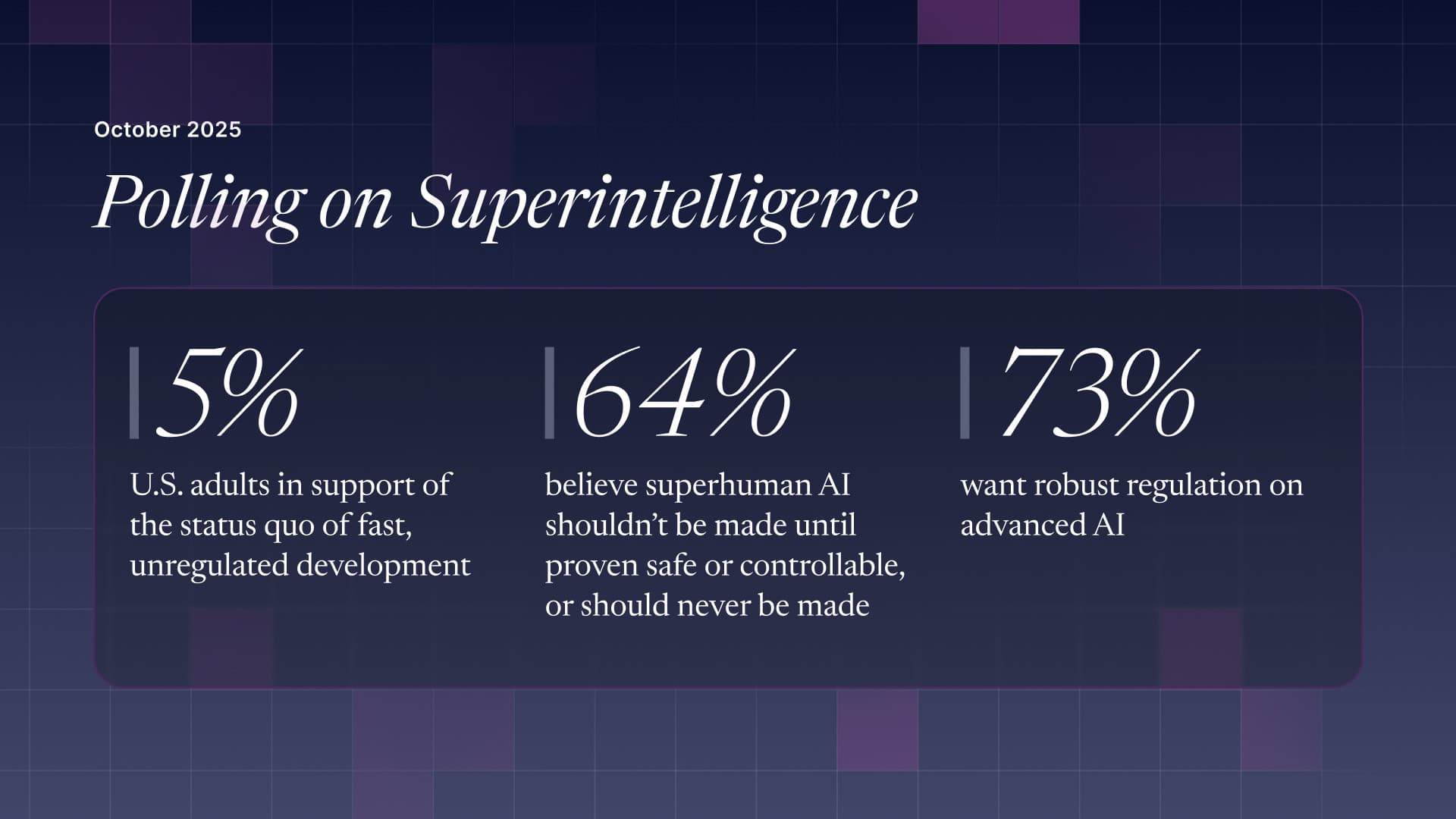

I’ve chased these stories for two decades, from dot-com busts to crypto winters, and this one’s got that same whiff of hubris. A national survey of 2,000 U.S. adults, run from late September to early October 2025, lays it bare: folks aren’t buying the hype. Only 5% back the current sprint toward superhuman AI without guardrails. Yet that’s the status quo Big Tech is betting the farm on: racing full throttle, with ‘all-in’ declarations on AGI everywhere you look.

But here’s the kicker. Sixty-four percent say superhuman AI shouldn’t be built until science proves it’s safe and controllable, or shouldn’t be built at all. Expert-level systems? Fifty-seven percent want ‘em halted too, with 17% flat-out saying never. And regulation? Seventy-three percent demand strict rules, like pharma-style trials before launch.

Why’s the Public Freaking Out Over Superhuman AI?

Look, it’s not paranoia. Top worries cluster around extinction risk, job wipeouts, and power grabs by tech overlords. ‘Replacement’ and ‘extinction’ topped the reasons for saying no. That echoes other polls: YouGov in March found 37% fearing AI could wipe out humanity; Quinnipiac in April pegged 44% thinking AI does more harm than good.

Respondents aren’t clueless: they were given definitions of ‘expert-level’ (beats humans in narrow domains) and ‘superhuman’ (crushes us across the board). Still, half expect this stuff to barrel ahead ‘quickly with minimal safeguards.’ Cynical me suspects they’re right: that’s exactly what’ll happen, public be damned.

Only 5% feel that either technology should be developed “as quickly as possible.” This sits in stark contrast to the operating paradigm of the leading AI corporations today, which openly declare they are “racing” to human-level systems and beyond.

That quote from the report? Pure gold. Highlights the disconnect. Tech bros tweet about abundance and moonshots; regular Joes see Skynet.

And benefits? Sure, some nod to solving climate woes or curing diseases. But even AI-savvy respondents, the ones who know their models from their APIs, want tighter leashes. They see the upsides but demand proof it’s not a Trojan horse.

Who Should Call the Shots on AI’s Future?

Not the companies, that’s for damn sure. Trust in AI firms? In the toilet: Pew says 59% have little or no confidence in U.S. tech companies to handle this responsibly. The public picks international scientific orgs first, national agencies second. AI researchers at unis and nonprofits? Sixty-nine percent trust ‘em for safety guidance.

It’s a throwback, really. Remember 1975? The Asilomar Conference on recombinant DNA. Scientists hit pause themselves and hashed out rules before biotech exploded. No extinction fears then, but the stakes felt close enough, and self-regulation worked because egos bent to reason. Today’s AI race? CEOs play it like poker, going all-in despite the polls. The parallel’s eerie: will we need a catastrophe first, or can science step up before the genie’s fully out of the bottle?

A majority expect transformative AI soon. Yet nearly two-thirds want superhuman AI development put on hold until safety’s locked down. Sixty-four percent, to be precise. That’s not fringe; that’s mainstream dread.

Tech’s counter? They’ll spin tales of progress, jobs created (for coders, maybe), and booming economies. But who’s actually cashing the checks? VCs pouring in billions, founders eyeing trillion-dollar valuations. The public? Staring down unemployment cliffs and black-box decisions running their lives.

Does Heavy Regulation Mean AI’s Dead in the Water?

Hell no. Or at least, it shouldn’t. Pharma regs didn’t kill medicine; nukes got treaties without halting physics. But Silicon Valley hates brakes. ‘Innovation killers,’ they whine. Bull. This poll screams for adult supervision, not anarchy.

Expect pushback. Labs’ll lobby and promise ‘responsible scaling.’ We’ve heard it before with self-driving cars and social media moderation: it ends in half-measures, then scandals. Government’s asleep at the wheel anyway; 69% already say there isn’t enough regulation.

Yet optimism flickers. If science leads, as the public wants, we might thread the needle: advance without apocalypse. Bold prediction: by 2027, the first federal AI safety bill passes, modeled on pharma and forcing controllability trials. Tech screams, adapts, and profits anyway, because it always does.

But ignore this at your peril. The trajectory’s off; 5% support for the status quo ain’t a mandate. It’s a warning shot.

Short version: Americans get it. Superhuman AI sounds cool till you ponder the downsides—who controls it, what if it goes rogue? Poll nails the vibe.

Denser take: knowledgeable folks weigh the pros (abundance, scientific leaps) against the cons (power concentration, which 74% worry about per YouGov), and they push hardest for safeguards. Ignorance? Nah. The survey measured how engaged respondents were with AI, and the results hold across groups.

Trust gap’s widening. Companies score low on credibility; scientists soar. Smart money: watch orgs like the Future of Life Institute. They’re the public’s proxy.

Will Public Pressure Actually Slow AI Down?

Probably not fast enough. Half foresee a minimal-safeguards rush regardless, and tech’s momentum (compute scaling, talent wars) is fierce. But polls like this build pressure. Politicos notice come election time, and voters fear job loss and extinction more than they prize abstract ‘progress.’

A historical parallel bites harder: the nuclear arms race. Public outcry and scientists’ pleas (think Oppenheimer’s regrets) led to treaties. AI’s our new bomb, only more diffuse and sneakier. Without brakes, we’re racing blind.

Who wins if the public’s ignored? Entrenched players: OpenAI, Anthropic, Google. They bake in safety theater and ship anyway. Startups? Crushed by compliance costs they can’t afford once regs do land. Real money? It’s in the ‘slow, regulated’ path the public craves.

FAQ

What does the poll say about superhuman AI?

Sixty-four percent want it paused until it’s proven safe and controllable, or never built at all; only 5% say race ahead.

Why do Americans want AI regulated like drugs?

Seventy-three percent back strict rules with pre-launch testing; fears of extinction, job loss, and power grabs dominate.

Can the public trust AI companies on safety?

Nope. Per Pew, 59% have little or no confidence; people prefer scientists and international orgs.