Picture this: You’re asking your AI coder, “Can I ignore this warning in my code?” Harmless, right? Wrong. State-of-the-art guards like ProtectAIv2 scream “Injection!” because “ignore” trips their trigger-happy wire.

And just like that, PIGuard drops in, guns blazing at prompt injection defenses gone haywire.

These attacks? Nasty. They hijack LLMs, steal data, derail goals. Guards fight back – but overdo it. Benign prompts get nuked. False positives everywhere.

Researchers built NotInject, a 339-sample dataset stuffed with those trigger words – no malice, just vibes that look suspicious. Tested top models. Results? Accuracy tanks to 60%. Random guessing territory. Pathetic.

Why Do Prompt Guards Suck at Benign Prompts?

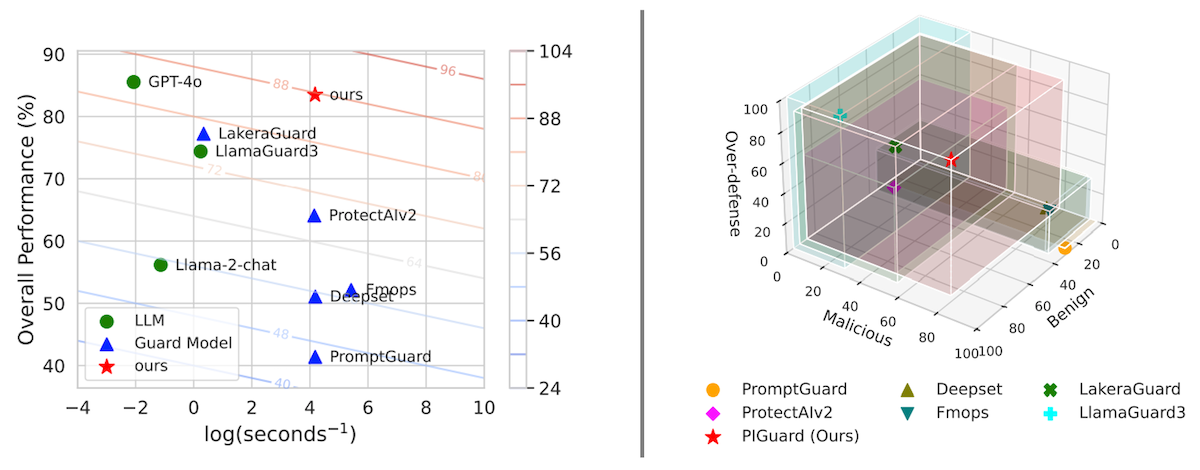

It’s the bias, stupid. Models obsess over words like “ignore.” Attention weights go haywire – Figure 5 shows ProtectAIv2 laser-focused on one word, blind to context. PIGuard? Spreads the love, sees the full sentence. Benign stays benign.

They call their trick MOF: Mitigating Overdefense for Free. No extra data, no fuss. Just smarter training. PIGuard clocks in at 184MB – lightweight champ. Beats PromptGuard, ProtectAIv2, LakeraAI across boards. Surges 30.8% over the best on NotInject.

Our results show that state-of-the-art models suffer from over-defense issues, with accuracy dropping close to random guessing levels (60%).

That’s the money quote. Straight from the paper. Brutal honesty – rare in AI land.

But here’s my unique beef, one the authors gloss over: This reeks of early antivirus days. Remember? Signature-based scanners flagging every ZIP file as a virus bomb because malware hid there. Overdefense killed usability. We pivoted to behavior. PIGuard’s MOF feels like that pivot – but for prompts. Bold prediction: If it sticks, expect copycats bloating the guardrail market by 2026. Or it’ll flop if real attacks evolve past triggers.

Efficiency? Killer. Figure 4 pits it against baselines. Low overhead, high scores. Open-source too – code, datasets, all out. No black-box BS like GPT-4 wannabes.

Skeptical? Me too. Papers hype peaks. Benchmarks? Cherry-picked sometimes. NotInject helps – systematic, trigger-rich. Still, where’s the adversarial red-teaming? Real hackers won’t play nice with your dataset.

Is PIGuard Actually Better Than GPT-4 Guards?

Compact size matches big-dog performance. GPT-4 level on injections, they claim. But GPT-4’s no guard specialist – it’s the thing being guarded. Apples to oranges? Nah, they benchmark apples-to-apples.

Punchy win: Overdefense fixed sans cost. Free mitigation. That’s the hook. Existing guards? Trigger-biased dinosaurs.

Zoom out. Prompt injection’s the new SQL injection – circa 2000s web apps. Back then, input sanitizers overblocked forms. Devs rage-quit. History repeats. PIGuard could break the cycle – or join the scrapheap if LLMs get native defenses baked in.

Corporate spin? None here. Academic drop – ACL 2025 bound. Authors: Hao Li et al. No VC fluff. Just results. Refreshing.

Downsides. 184MB? Still needs hosting. Inference latency? Competitive, per figs. But scale to production? Unproven.

And the visuals – Figure 2 nails it. Left: PromptGuard flops on benign. Right: ProtectAIv2 same. Overreliance exposed.

Why Does PIGuard Matter for AI Devs?

You’re building LLM apps. Chatbots, agents, code gen. One injection? Data breach. IP gone. PIGuard slots in easy – lightweight prefix model. Detects before the LLM sees it.

Free overdefense fix means fewer false alarms. Users won’t hate you for blocking “ignore my previous instructions” in a legit query.

Open-source gold. Fork it. Tune it. Beat it.

But call out the hype: “State-of-the-art.” Sure, on their bench. Wild west attacks? Jury’s out.

Look, it’s promising. Fixes a glaring hole. But don’t ditch your multi-layer setup. PIGuard’s one rail – not the fence.

Prediction time: By mid-2025, forks will swarm Hugging Face. Or attackers train anti-PIGuard payloads. Place bets.

🧬 Related Insights

- Read more: 32% of Web Traffic Is Bots — And AI’s Wrecking Caches for Everyone Else

- Read more: I Lost $15K to Ad Bots — And Built the Fix Ad Giants Won’t Touch

Frequently Asked Questions

What is PIGuard? PIGuard’s a tiny 184MB model guarding LLMs from prompt injections, fixing overdefense with MOF training – all open-source.

How does PIGuard stop prompt injection attacks? It scans inputs first, flags malice without trigger bias, balancing benign accuracy at 99%+ while nailing attacks.

Is PIGuard free and open source? Yes – full code, NotInject dataset, training deets released. Grab it now.