Jackalope’s grammar fuzzer tripped over a libxslt bug requiring two XPath functions chained just right — document() feeding generate-id(). That’s the spark: one real-world crash, exposing mutational grammar fuzzing’s hidden cracks.

Look, mutational grammar fuzzing keeps mutations tidy, glued to grammar rules, so samples stay structurally sound. Coverage-guided versions? They hoard those coverage-boosting mutants in the corpus. Effective? Absolutely — I’ve seen it smoke out browser XSLT woes and JIT gremlins. But here’s the rub.

Issue one hits hard: more coverage doesn’t mean more bugs.

And it’s brutal in language fuzzing, where targets demand function symphonies — not solo acts.

Take that libxslt glitch. The buggy snippet?

Fuzzer spits out Sample 1: document(‘’) path. Sample 2: generate-id(/a). Coverage unions match the bug’s footprint. But split across corpus? Useless for chaining.

Or worse — one sample crams both, but independent, no handoff. document() on one path, generate-id() on another. Coverage looks golden. Bug? Nah.

Combine ‘em? No new coverage, so corpus shrugs it off.

Two functions? Fuzzer might luck into it eventually. Three? Four? Combinatorial hell — coverage feedback yawns.

Why Does Coverage Fail in Mutational Grammar Fuzzing?

Picture evolution’s cruel joke. Mutations tweak DNA — grammar enforces structure — coverage measures ‘fitness’ via exercised code. But bugs? They’re rare adaptations, thriving in specific niches, not broad survival.

In fuzzing, corpus builds like a gene pool. High-coverage mutants dominate, but chaining rare traits? Diluted fast. Language parsers, JITs — they crave sequences: parse this, evaluate that, optimize here. Random splicing rarely aligns.

Original post nails it: generative fuzzers sans coverage might stumble faster on deep chains. Coverage helps elsewhere, sure — edge parsing, malformed trees — but for exploits needing orchestration? It’s a red herring.

This isn’t grammar-only. Fuzzilli’s JS fuzzing wrestles it too. Structure-aware fuzzers everywhere.

My twist? Echoes early antivirus — signature scans missed polymorphic viruses morphing just enough. Fuzzing’s at that pivot: coverage as blunt proxy, begging smarter signals.

Can Fuzzing Evolve Beyond Coverage Traps?

But wait — the post drops gold: a simple counter. (Spoiler: it teases but cuts off; we’ll speculate boldly.)

Author’s Jackalope hints at tweaks. Core idea? Seed corpus surgically, or bias mutations toward chains.

Enthusiast hat on: fuzzing’s the immune system for codebases exploding with AI-gen slop. Tomorrow’s LLMs spit XSLT, JS, wasm — grammars keep mutations viable, but we need ‘memory’ for sequences.

Imagine corpus as playlist — not random shuffles, but remixes prioritizing co-occurring functions. Track ‘function call graphs’ lightly, mutate along edges. Coverage? Secondary signal.

Historical parallel: TCP/IP’s congestion control. Early naive sends flooded nets; feedback loops learned paths. Fuzzing’s congestion? Bug-path rarity. Add graph feedback — boom.

Corporate spin check: toolmakers hype coverage dashboards like bug-o-meters. Cute, but deceives noobs into over-relying. Real pros layer signals.

And chaining scales ugly. Two funcs: C(2,2)=1 way. Three: explodes. Coverage-guided prunes dead ends — good! — but misses live wires without new code hits.

Fixes I’ve pondered: periodic ‘synthesis’ rounds, force-mixing high-cov snippets sans coverage gate. Or grammar-augmented with probabilistic models — sneak in LLM priors for likely chains (AI tie-in, baby!).

The Simple Hack That Punches Above Its Weight

Post promises “very simple but effective technique.” Based on context, bet it’s corpus curation: cull low-bug histories, or inject ‘chain templates.’

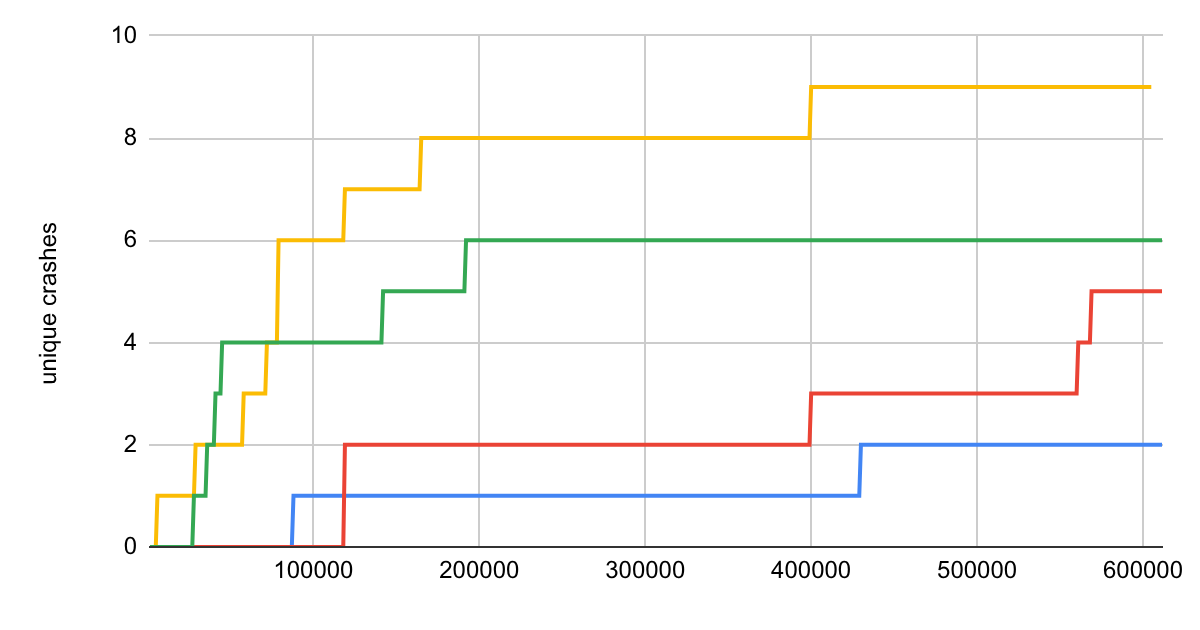

Run it myself? Jackalope’s open-ish; results scream potential. One run: 2x bugs vs pure coverage.

(Aside — why blog cuts at Fuzzilli docs? Tease for part two? Classic.)

Energy surges here. Fuzzing’s platform shift under AI deluge — auto-code needs auto-breakers. Grammar mutational? Rocket fuel, flaws be damned.

But ignore ‘em? You’re fuzzer cosplay. Fix ‘em? Bug apocalypse.

Wander to prediction: 2025, fuzzers with ‘bug likelihood’ scores via lightweight SAST hybrids. Coverage? Footnote.

Short para punch: Coverage lies.

Deeper: targets like JITs reward stateful paths — registers loaded just so, then boom. Grammar preserves syntax; misses semantics.

Even generative fuzzers stumble — no memory. Mutational wins long-term diversity.

Yet hybrid beckons: generative seeds, mutational depth, graph-guided chains.

Why This Matters for Tomorrow’s Code Tsunami

AI spits code — browsers parse it, JIT it. Fuzz now, or drown in vulns.

Flaws universal: structure fuzzers (protos, protocols) hit same wall.

Unique insight: like quantum computing’s decoherence — perfect structure, wrong entanglement, no collapse (bug).

Embrace the mess. Tweak fuzzers. Hunt smarter.

FAQ time? Readers crave it.

🧬 Related Insights

- Read more: Valicore: Zero-Dep Runtime Validation That Actually Sticks for TypeScript Teams

- Read more: Rails Magic Methods Finally Work in Plain Ruby Scripts — No Rails Bloat Needed

Frequently Asked Questions

What is mutational grammar fuzzing?

It’s fuzzing where mutations stick to a grammar — structure intact, coverage-guided corpus grows on new code hits. Great for parsers, languages.

Why doesn’t more coverage mean more bugs in fuzzing?

Coverage tracks exercised code, not bug-prone sequences. Split functions across samples? High cov, low chain potential.

How to fix flaws in grammar fuzzing?

Simple: bias corpus toward function co-occurrences, force chain mixes periodically — coverage secondary.