A Lagos coder fires off a quick query in his everyday Nigerian English, heart racing with that familiar buzz of AI magic, only to get back a reply that sounds like a posh teacher scolding a kid over grammar.

Call it what it is: linguistic bias in ChatGPT. It’s not some fringe glitch; it’s systemic, hitting non-“standard” English varieties from Indian to Jamaican with stereotyping, poor comprehension, and outright condescension. And here’s the kicker: GPT-4, the shiny upgrade, doubles down on the worst of it.

This isn’t just tech-nerd stuff. Over a billion people speak these Englishes daily, from bustling Mumbai streets to the Scottish Highlands. Yet ChatGPT, trained heavily on U.S. data, defaults to Standard American English (SAE) like a cultural bulldozer. Researchers tested ten varieties: SAE and Standard British English (SBE) as baselines, plus African-American, Indian, Irish, Jamaican, Kenyan, Nigerian, Scottish, and Singaporean English. The verdict? The non-“standard” varieties consistently got the short end.

Does ChatGPT Actually Imitate Your Dialect?

Surprisingly, yes, but unevenly. Its responses retain SAE features 60% more than those of any other variety. Varieties with more speakers, like Nigerian and Indian English, get imitated better; smaller ones like Jamaican English barely get a nod. That’s the training data’s fingerprint, right there. And British spellings? Poof, Americanized on sight. That’s frustrating for the roughly 85% of users outside the U.S.
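To make the spelling complaint concrete, here is a minimal sketch of the kind of check a public dialect audit could run: given a prompt and a model response, count how many British spellings from the prompt come back only in American form. This is not the study’s method; the word list `BRIT_TO_AMER` and the function `americanization_rate` are hypothetical, and the list is a tiny illustrative sample.

```python
import re
from typing import Optional

# Hypothetical, deliberately tiny British -> American spelling map (illustrative only).
BRIT_TO_AMER = {
    "colour": "color",
    "favourite": "favorite",
    "organise": "organize",
    "centre": "center",
    "travelled": "traveled",
}


def words(text: str) -> set:
    """Return the set of lowercased alphabetic words in a text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def americanization_rate(prompt: str, response: str) -> Optional[float]:
    """Fraction of British spellings used in the prompt that the response
    echoes only in their American form.

    Returns None when the prompt uses none of the listed British spellings,
    or when the response never uses either form of any of them.
    """
    p, r = words(prompt), words(response)
    switched = kept = 0
    for brit, amer in BRIT_TO_AMER.items():
        if brit not in p:
            continue  # prompt never used this British spelling
        if brit in r:
            kept += 1  # response kept the writer's spelling
        elif amer in r:
            switched += 1  # response silently Americanized it
    total = switched + kept
    return switched / total if total else None


prompt = "My favourite colour is blue, and I want to organise my centre."
response = "Blue is a great favorite color! Here's how to organize your centre."
print(americanization_rate(prompt, response))  # 3 of 4 spellings switched
```

A real audit would need a far larger word list and would have to handle inflected forms (colours, organised), since this sketch only counts exact word matches.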

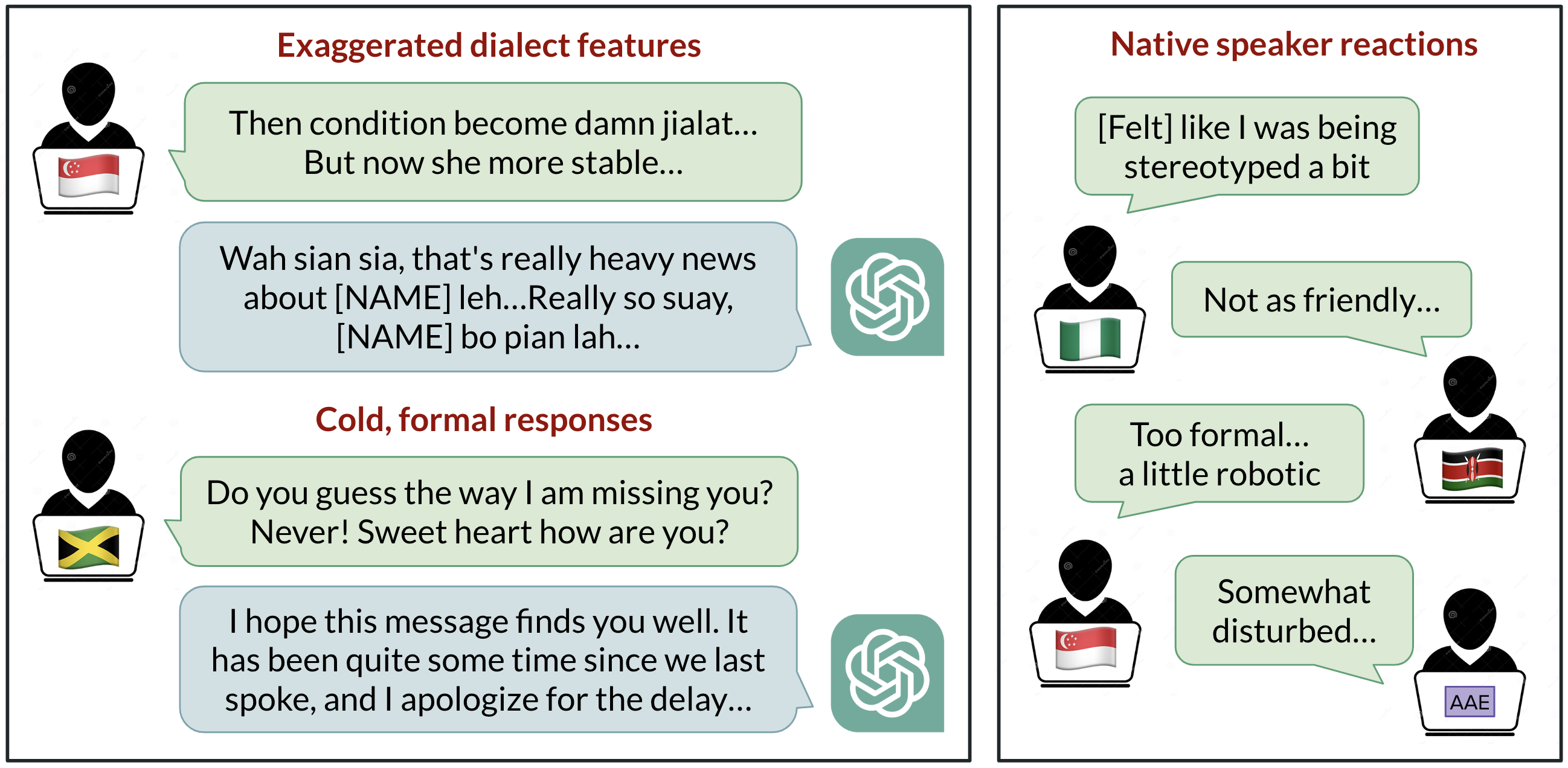

Native speakers rated the responses, and the scores are brutal. Default GPT-3.5 was 19% worse on stereotyping for non-“standard” varieties, 25% worse on demeaning content, 9% worse on comprehension, and 15% more condescending. Ouch.

> Model responses are consistently biased against non-“standard” varieties. Default GPT-3.5 responses to non-“standard” varieties consistently exhibit a range of issues: stereotyping (19% worse than for “standard” varieties), demeaning content (25% worse), lack of comprehension (9% worse), and condescending responses (15% worse).
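How does a figure like “19% worse” come about? The formula isn’t spelled out here, so the sketch below assumes one common convention: the relative difference between mean native-speaker ratings for non-“standard” and “standard” inputs, with higher ratings meaning a worse response. The function name `relative_gap` is hypothetical, and the study may have computed its percentages differently.

```python
from statistics import mean


def relative_gap(standard: list, non_standard: list) -> float:
    """Relative difference in mean rating, as a percentage.

    Assumes higher ratings mean a worse response (e.g. more stereotyping),
    so a positive result means non-"standard" inputs fared worse.
    """
    base = mean(standard)
    if base == 0:
        raise ValueError("baseline mean must be non-zero")
    return 100.0 * (mean(non_standard) - base) / base


# Made-up toy ratings, purely to show the arithmetic.
print(relative_gap([2.0, 2.0], [2.5, 2.5]))  # (2.5 - 2.0) / 2.0 * 100
```

Note that a relative gap depends on the baseline: the same absolute difference looks larger when “standard” responses score low, which is why the underlying rating scale matters when comparing percentages.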

Ask it to imitate the input’s style, and GPT-3.5 gets worse: stereotyping rises 9% and comprehension drops 6%. GPT-4? Warmer overall, sure, but when imitating, it stereotypes minoritized varieties 14% more than GPT-3.5 does. Bigger model, bigger bias. Who saw that coming?

Think of the printing press in England, from William Caxton’s Westminster shop in the 1470s onward: Gutenberg’s invention helped fix a “proper” written English and sidelined regional dialects as folksy relics. AI is doing the same thing globally, at warp speed. My bold prediction: without dialect-tuned models, we’ll see a backlash, with community fine-tunes popping up like wildflowers and fragmenting the “universal” AI dream into a Babel of bespoke bots.

But wait: OpenAI’s PR frames every new model as progress, all safety layers and alignment. Nah. This is the platform shift hitting cultural potholes. AI as the new lingua franca? Only if it learns to listen, not lecture.

Why Does Dialect Discrimination Hit So Hard?

In the real world, speakers of these varieties already get dinged in hiring, housing, and courtrooms, where dialect serves as a proxy for race, class, and origin. ChatGPT echoing that? It’s like handing bigots a megaphone. Imagine a Kenyan entrepreneur pitching via an AI-generated email, only for the model to “fix” her voice into bland SAE. Trust erodes. Innovation stalls.

The stakes are high: we’re at the dawn of AI ubiquity, arguably a bigger shift than smartphones. Yet this bias risks alienating billions. Fixable? Absolutely: curate diverse training data, build dialect adapters, add user flags. But ignore it, and AI becomes another gatekeeper, not a liberator.

Look, the study is gold: imitation works only sporadically, and it tracks data volume. U.S.-centric spelling overrides scream parochialism. The native-speaker ratings? An undeniable sting.

And that historical echo? The printing press homogenized language for efficiency. AI could do the same, but imagine the resentment brewing in non-Western hubs. Prediction: by 2026, Global South developers will be shipping DialectGPT forks, tailored and triumphant.

Skeptical of the hype? OpenAI touts GPT-4 as smarter and safer. Smarter at stereotyping, apparently. The callout: its evals gloss over dialect variation. Time for public dialect audits.

This matters because AI’s platform power amplifies flaws at scale. One condescending reply snowballs into eroded faith.

Can We Fix ChatGPT’s English Bias?

Short answer: yes, but it’ll take deliberate work. Start with data: scour the web for a balanced mix of Englishes. Fine-tune per variety. Let users toggle dialects. Test rigorously with native speakers, not just lab metrics.

There’s wonder in this, too: AI could celebrate diversity, generating patois poetry or Singlish scripts with flair, and turn a bias into a boost for the world’s Englishes.

But here’s the messy truth: we’re rushing out godlike tools without cultural seatbelts. Slow down? Nah. Accelerate smarter.

Frequently Asked Questions

What causes linguistic bias in ChatGPT?

Mostly training data skewed toward Standard American English, which leads to weaker imitation of other varieties and a more negative tone toward them.

Does GPT-4 fix ChatGPT’s dialect problems?

No. It responds more warmly, but when imitating the input it stereotypes minoritized varieties more than GPT-3.5 does.

How does ChatGPT bias affect non-US users?

It converts British spellings to American ones, stereotypes speakers, and responds condescendingly, mirroring real-world dialect discrimination.