44 wiki pages. From 50 raw files. That’s what happened when I fired up this Markdown-powered second brain, slashing token use by 90%—no vector databases, no RAG headaches.

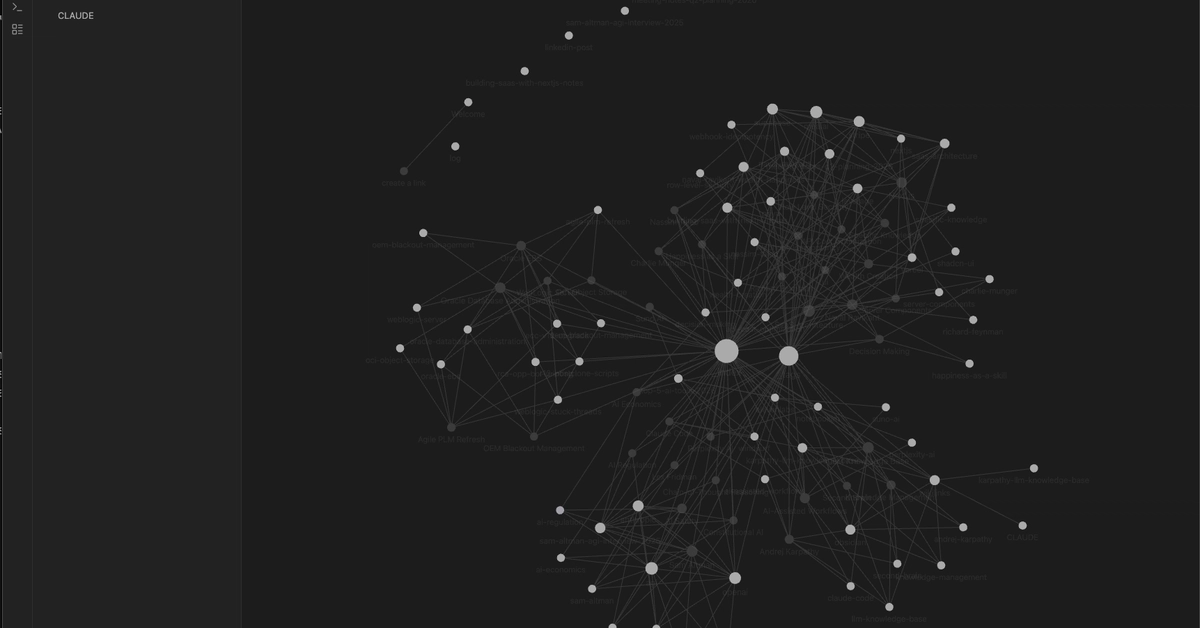

Andrej Karpathy dropped the spark: LLMs gobbling markdown files straight-up, spitting out organized wiki pages with backlinks. Obsidian lights it up in graph view. Mind. Blown.

But here’s the thing—I grabbed that idea and turbocharged it. Multi-format ingestion. Smart duplicates dodged. Auto-indexed master hub. Suddenly, your personal second brain isn’t just a folder; it’s a living, breathing knowledge galaxy, pulsing with connections only an AI could dream up.

Picture this: Transformers revolutionized NLP like the steam engine kicked off factories—raw power, scaled endlessly. Now, Claude does the same for your brain dump. Drop PDFs? Marker converts ‘em. YouTube rants? Transcripts auto-pull. Web scraps? Cleaned and ready. It’s ingestion on steroids.

Can Markdown Files Outsmart Vector Databases?

Look, vector DBs promise magic—embeddings, similarity hunts—but they’re brittle beasts. Chunks float in vector space, relationships guessed at best. This? LLM reads holistically, weaves actual [[backlinks]], tags that make sense. Token savings hit 90% because you’re querying a lean wiki, not bloated originals.

The transformer is a neural network architecture that relies entirely on self-attention mechanisms…

Key Concepts

- Self-Attention — see [[Self-Attention]]

- Multi-Head Attention — parallel attention layers

- Positional Encoding — since transformers have no recurrence

That’s a slice from my generated “Transformer Architecture” page. Frontmatter tracks sources, related_topics link siblings like [[BERT]] and [[GPT]]. Obsidian’s graph? Clusters emerge—deep learning hubs, NLP constellations. It’s not search; it’s navigation through ideas that feel human-curated.

Duplicate detection saves the day. Before Claude crafts a new page, it scans the wiki: overlap? Merge smoothly. No silos, no cruft. The _Index.md? Auto-refreshed bible—categorized links, timestamps, snippets. “Last updated: yesterday on quantum entanglement.” Boom, context at a glance.

Setup’s dead simple. Three folders: knowledge-base/raw, wiki, .claude/commands. Drop files in raw/articles or raw/pdfs. Fire Claude Code CLI: “Ingest all in raw/, build wiki pages.”

It scans changes. Extracts concepts, entities, relationships. Updates index. Done.

Obsidian opens the wiki folder—graph view explodes with meaning. Token efficiency? Feed raw files to GPT: wallet-draining. This way? Link-hop to details, LLM context tiny and precise.

Why Does This Matter for Your Daily Grind?

We’re in the platform shift—AI as your eternal memory layer. No more “ChatGPT forgot our last convo.” This persists across sessions, human-readable, portable. Export? Just Markdown. Share? Git repo away.

But my twist—and this is the insight Karpathy’s post misses—this echoes the wiki’s birth. Ward Cunningham’s original wiki: plain text files, hyperlinks by hand. Wikipedia scaled it globally. Now AI hyperlinks for you. Prediction: In 18 months, personal second brains like this underpin every AI agent. Decentralized, local-first, Big Tech’s cloud moats? Crumbling.

Corporate hype calls this “RAG 2.0.” Nah. It’s RAG killed. No pipelines, no infra tax. Claude organizes like a genius intern—free, tireless.

I ingested transcripts from Karpathy’s lectures, PDFs on attention papers, notes from xAI talks. Graph showed clusters: attention mechanisms linking to scaling laws, back to Grok’s architecture. Wonder hit: This isn’t storage; it’s augmentation. Your brain, but electric.

Energy here. Pace yourself through a day—query index for “LLM fine-tuning,” hop [[PEFT]], dive [[LoRA]]. Relationships surface serendipity: Oh, [[QLoRA]] ties back to quantization from a dusty PDF.

Short version? It’s addictive.

Scaling up. 50 files was the test; now 200+. Index handles it. Claude’s prompt (in ingest.md) is the wizard: “Extract key concepts… check duplicates… add frontmatter… update _Index.”

Tweak it. Add voice notes? Transcribe first. Podcasts? Fetch RSS, yank transcripts. The raw/ folder’s your black hole—suck in chaos, emit order.

Obsidian plugins amplify: Dataview queries frontmatter, Canvas for mindmaps. But core? Pure Markdown resilience.

Critique time—Claude Code’s Anthropic-only now. Open-source it? Swap for Ollama, local LLMs. Cost? Pennies per ingest versus Pinecone bills.

This isn’t toy. Knowledge workers—devs, researchers, writers—your edge is synthesized insight. Raw files bury it; this surfaces gold.

How Do You Build Your Own Second Brain Today?

mkdir knowledge-base/{raw,wiki}

Drop files. Claude: ingest. Obsidian: explore.

That’s it. No PhD required.

The wonder? AI’s strength—comprehension—meets files’ weakness—disorder. Result: Second brain that grows with you, not against you.

🧬 Related Insights

- Read more: AI Coders That Skip Your Repo? They’re Just Glorified Suggestion Boxes

- Read more: SonarQube’s Community Edition: Free Lunch or Developer Trap?

Frequently Asked Questions

What is a personal second brain with Markdown and Claude?

It’s a folder system where Claude AI reads your raw docs (PDFs, transcripts, articles), generates linked wiki pages in Markdown, and builds an indexed knowledge graph viewable in Obsidian—no databases needed.

How to set up Claude Code for knowledge base ingestion?

Create knowledge-base/raw and /wiki folders, drop files in raw, run Claude CLI and prompt: “Ingest raw/ files into wiki/ with backlinks and index.” Use Obsidian for graphs.

Does this replace tools like Notion or Roam Research?

For AI-powered organization, yes—cheaper, local, token-efficient. Notion’s great for manual edits; this auto-organizes via LLM smarts.