That ominous blue swath across Anthropic’s labor market graph — it’s inescapable on X, LinkedIn, everywhere AI doomers and boosters clash.

It claims LLMs could theoretically nail 80% of tasks in everything from arts to management. Shocking, right?

But hold on.

Anthropic’s report on AI’s theoretical capabilities in the job market doesn’t spring from their own Claude tests or forward projections. Nope. It’s recycled from an August 2023 paper — ‘GPTs are GPTs’ — by OpenAI folks, OpenResearch, and Penn researchers. Pre-Claude 3. Pre-GPT-4o. Hell, pre-half the benchmarks we obsess over now.

How Did Anthropic Measure ‘Theoretical Capabilities’?

They didn’t measure. They borrowed.

The original paper tasked GPT-4 (vanilla edition) with evaluating tasks drawn from the 1,016 occupations in the O*NET database, those granular job duties like ‘compile data’ or ‘draft contracts.’ For each, the model spat out a yes/no: can an LLM do this, theoretically, today or soon?

“We prompt GPT-4 to classify whether LLMs could perform each task, defining ‘perform’ as replicating human outputs with sufficient quality for real-world deployment.”

That’s the money quote. Sufficient quality. Real-world deployment. Bold words for a model that, back then, hallucinated footnotes and botched basic math half the time.

Anthropic just slapped those scores onto their chart as the ‘blue line’: an aspirational ceiling set against current observed exposure (red, from actual usage). No updates. No recalibration for 2024’s model leaps. It’s like citing a 2018 iPhone review to hype the iPhone 16.

And here’s the kicker: this mirrors the expert systems bubble of the 1980s. Back then, companies like DEC poured millions into rule-based AI promising to automate engineering diagnostics. Theoretical capabilities? Sky-high on paper. Real impact? Zilch, because integration into messy human workflows proved close to impossible, and flops like Japan’s Fifth Generation project helped sink the dream. LLMs face the same wall. The problem isn’t raw task competence; it’s architectural glue: APIs, legacy systems, human oversight loops. Anthropic’s blue blob ignores that entirely.

Why Does This ‘Theoretical’ Metric Miss the Real Job Fight?

Zoom out. Observed exposure — that red sliver — clocks in at 20-30% for most categories. Why the gap?

Tasks sound simple: ‘schedule appointments.’ But in practice? It’s entangled with HIPAA compliance, patient no-shows, EHR glitches. LLMs crush isolated demos; they crumble in the wild.

Anthropic admits as much, sorta. Their report nods to ‘automation potential’ needing economic viability, but the graph? It screams takeover. Corporate PR spin at its finest — visualize doom to fundraise on safety fears.

Look, readers like you get this. You’re not panic-scrolling plebs. The ‘how’ here is lazy aggregation: take one-shot GPT judgments, average across occupations, plot. No error bars. No human validation beyond spot-checks. The ‘why’? To frame Anthropic as the sober economist in a hype circus, even as Claude 3.5 Sonnet laps GPT-4 on every ARC benchmark.
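To make that aggregation step concrete, here is a minimal sketch of how per-task yes/no labels roll up into a per-category share, along with the binomial standard error that a chart like this leaves out. The rows and category names below are invented for illustration; they are not taken from the paper or from Anthropic's report, and the real pipeline may weight tasks differently.

```python
from collections import defaultdict
from math import sqrt

# Hypothetical (category, task, label) rows; label 1 means "an LLM could do this".
labels = [
    ("legal", "draft contracts", 1),
    ("legal", "argue in court", 0),
    ("legal", "summarize filings", 1),
    ("legal", "review NDAs", 1),
    ("management", "motivate teams", 0),
    ("management", "compile data", 1),
]

def exposure_by_category(rows):
    """Return {category: (share, standard_error, n)} from binary task labels."""
    grouped = defaultdict(list)
    for category, _task, label in rows:
        grouped[category].append(label)
    summary = {}
    for category, values in grouped.items():
        n = len(values)
        share = sum(values) / n
        # Binomial standard error of a proportion; wide when n is small.
        se = sqrt(share * (1 - share) / n)
        summary[category] = (share, se, n)
    return summary

for category, (share, se, n) in exposure_by_category(labels).items():
    print(f"{category}: {share:.0%} +/- {se:.0%} (n={n})")
```

Even on toy data the point shows up fast: a category built from a handful of one-shot judgments carries an error band wide enough to swallow the headline number.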

Outdated baselines breed false alarms.

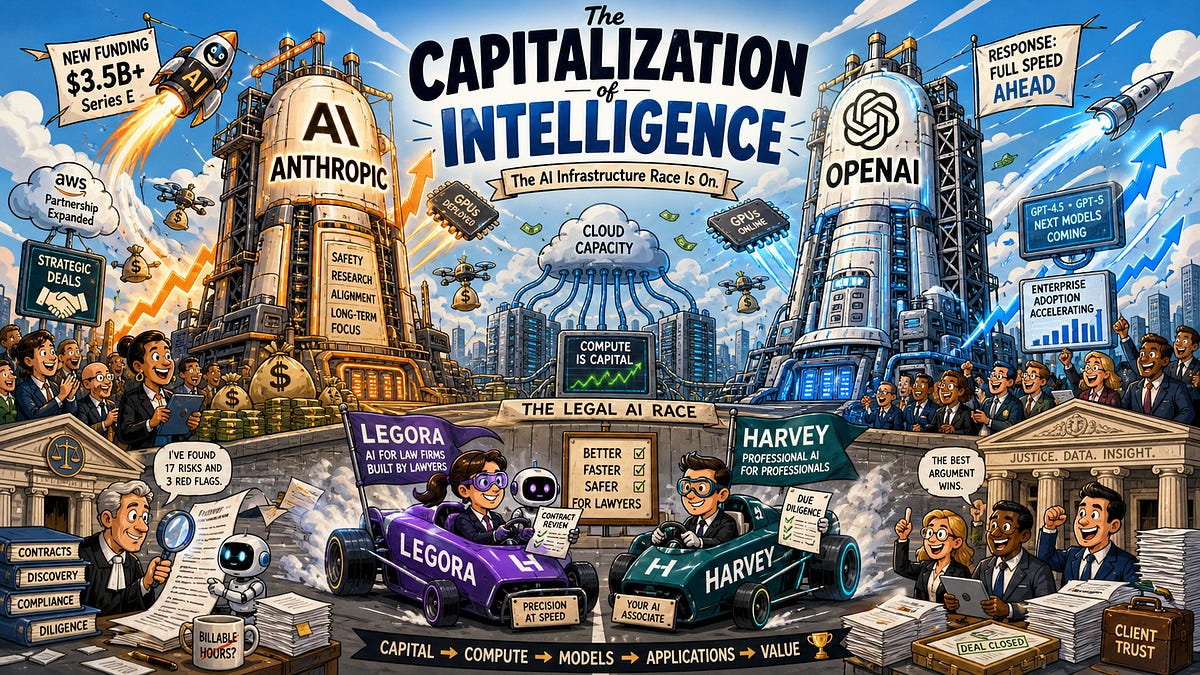

Now, drill deeper into categories. Legal? Blue line at 80%. Sure, Claude drafts NDAs decently — but arguing in court? Parsing novel case law under time pressure? That’s architecture, not autocomplete. Management? ‘Motivate teams.’ LLMs mimic pep talks; they don’t read rooms or build trust over years.

Is Anthropic’s Graph Fueling Unnecessary AI Job Panic?

Damn right it is.

We’ve seen this movie: 2013’s Frey/Osborne study pegged 47% of US jobs as at high risk of automation, sparking universal basic income TED talks. Actual decade? Zero apocalypse. Productivity ticked up sans mass layoffs, thanks to reskilling and task recombination.

Anthropic’s theoretical capabilities play the same script — but amplified by LLM hype cycles. Prediction: by 2027, we’ll laugh at this graph like we do Watson’s Jeopardy win promising doctor replacements. Real shift? Augmentation in narrow verticals (code review, content outlines), not wholesale swaps. Why? Cost curves. A $20/month Claude sub won’t displace a $60k admin until latency drops 10x and reliability hits 99.99%.
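The cost-curve argument can be sketched as back-of-envelope arithmetic: a cheap model call only beats a human once the expected cleanup cost of its failures is small enough. Everything below is an assumption for illustration, including the per-task prices, the cost of a failure, and the simple linear model itself.

```python
def expected_cost_per_task(model_cost, reliability, failure_cost):
    """Model cost plus the expected cost of cleaning up failed attempts."""
    return model_cost + (1.0 - reliability) * failure_cost

def break_even_reliability(model_cost, human_cost, failure_cost):
    """Reliability at which the model's expected cost equals the human's."""
    return 1.0 - (human_cost - model_cost) / failure_cost

# Hypothetical numbers: a $0.01 model call, a $5.00 human-handled task,
# and $200 to detect and repair one bad output in production.
MODEL_COST = 0.01
HUMAN_COST = 5.00
FAILURE_COST = 200.00

for reliability in (0.95, 0.99):
    cost = expected_cost_per_task(MODEL_COST, reliability, FAILURE_COST)
    verdict = "cheaper" if cost < HUMAN_COST else "pricier"
    print(f"reliability {reliability:.2%}: ${cost:.2f} per task ({verdict} than human)")

threshold = break_even_reliability(MODEL_COST, HUMAN_COST, FAILURE_COST)
print(f"break-even reliability: {threshold:.2%}")
```

With these made-up inputs the break-even lands around 97.5% reliability. Raise the failure cost (think compliance or patient data) and the bar climbs toward the extra nines, which is the whole point.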

But Anthropic’s not wrong about exposure trends. Red line’s climbing; tools like Cursor already nibble dev tasks. The sin? Equating theoretical peaks with imminent reality, sans the gritty ‘how’ of enterprise adoption.

Imagine finance analysts. Blue says 85% of tasks are LLM-able. Draft reports? Yes. But stress-test models during black swans? Negotiate with regulators? That’s human architecture: intuition forged in crashes.

What Happens When We Update for 2024 Models?

Run the O*NET gauntlet on Claude 3.5 or o1-preview. Scores jump — maybe 90% theoretical. But deployment? Still bottlenecked by data silos, bias audits, union pushback.

Here’s the thing. Anthropic could’ve commissioned fresh evals. They chose stasis. Smells like positioning: ‘See, even theoretically, it’s manageable — fund us!’

Critics like me probe deeper. Original paper’s methodology? Single-prompt brittleness (tweak wording, scores swing 15%). No multi-model ensemble. No cost-benefit calculus (a $0.01 task vs. a $100 human hour). O*NET tasks? Static 2010s snapshot, ignoring gig-economy evolutions like no-code tools already automating ‘admin’ before LLMs arrived.
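Single-prompt brittleness is easy to check for if you have labels from more than one wording of the rubric. A rough sketch, assuming two hypothetical labeling runs over the same tasks (the task names and labels here are invented, not drawn from the paper):

```python
def flip_rate(run_a, run_b):
    """Fraction of tasks whose label changes between two prompt wordings."""
    if run_a.keys() != run_b.keys():
        raise ValueError("both runs must label the same tasks")
    flips = sum(run_a[task] != run_b[task] for task in run_a)
    return flips / len(run_a)

def share_exposed(run):
    """Share of tasks labeled 1 ("an LLM could do this") in one run."""
    return sum(run.values()) / len(run)

# Hypothetical labels from two phrasings of the same classification prompt.
run_a = {"compile data": 1, "draft contracts": 1,
         "schedule appointments": 1, "motivate teams": 0}
run_b = {"compile data": 1, "draft contracts": 0,
         "schedule appointments": 1, "motivate teams": 0}

print(f"exposed share, wording A: {share_exposed(run_a):.0%}")
print(f"exposed share, wording B: {share_exposed(run_b):.0%}")
print(f"tasks that flipped: {flip_rate(run_a, run_b):.0%}")
```

Reporting the flip rate next to the headline share would show readers how much of the ‘ceiling’ is signal and how much is prompt phrasing.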

Theory’s cheap. Reality bites.

Frequently Asked Questions

How did Anthropic measure AI’s theoretical capabilities in the job market?

They didn’t. They borrowed 2023 GPT-4 judgments on O*NET tasks, which assumed ‘sufficient quality for deployment’ without real-world tests.

Will AI really replace 80% of jobs based on Anthropic’s graph?

No. Theoretical max ignores integration costs, reliability gaps, and human factors; observed use stays under 30%.

What’s the difference between observed exposure and theoretical capability?

Observed: actual LLM use today (red). Theoretical: speculative ‘could do it soon’ (blue, outdated).