Harvey’s legal agents just learned to do their jobs far better. Actually, far better than most of us expected.

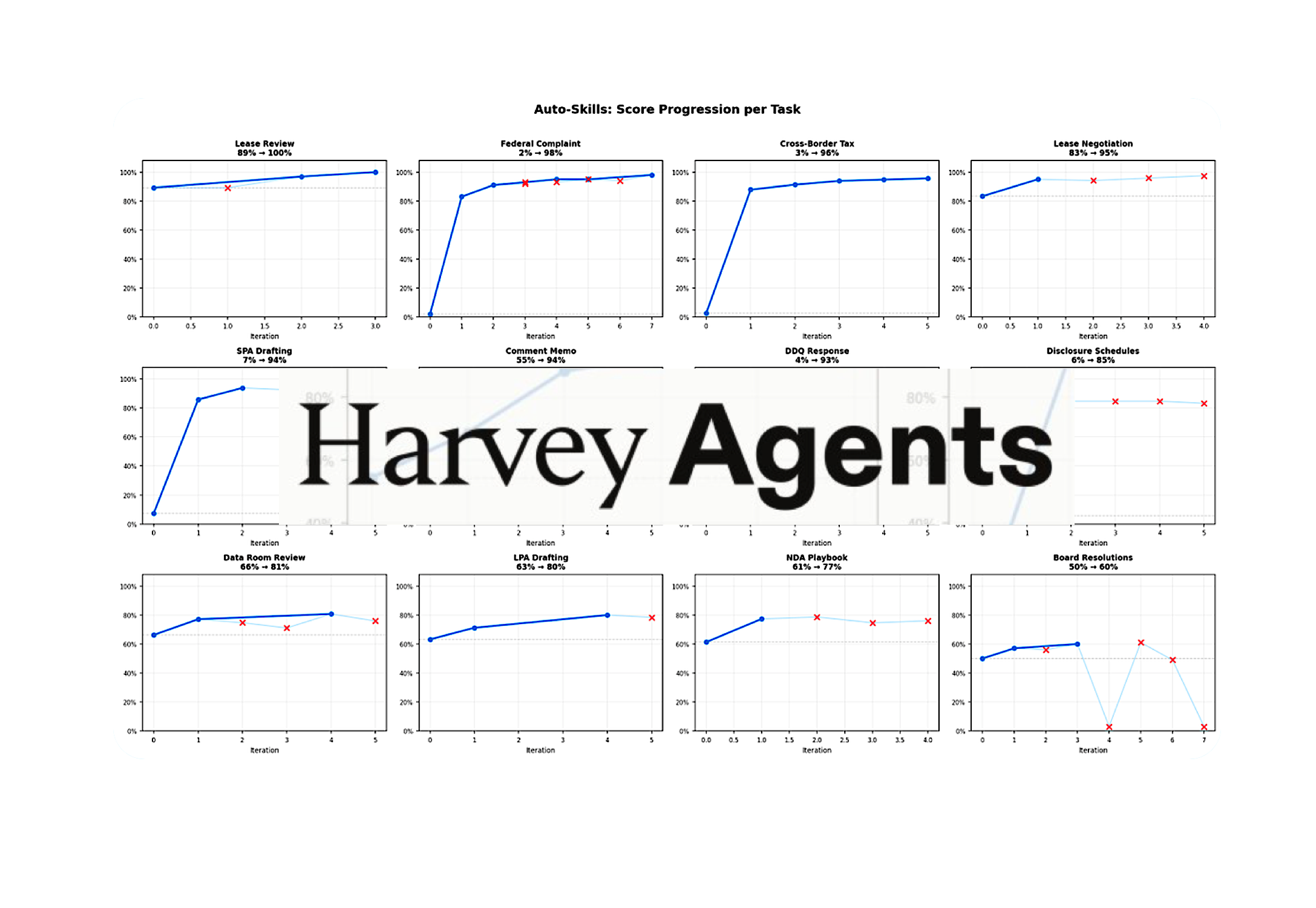

The company published a paper this week detailing an experiment in what it calls harness engineering—basically, building an educational infrastructure around AI agents so they can learn from their own mistakes. The results? Agent performance jumped from 40.8% to 87.7% across 12 complex legal tasks. Seven out of twelve finished above 90%. One hit 100%.

Before you dismiss this as typical tech hype, understand what’s actually happening here. This isn’t OpenAI’s ChatGPT answering your questions about contract law. This is an autonomous system learning to draft complaints, review commercial leases, prepare tax memos, and generate due-diligence responses—work that currently keeps junior associates and contract reviewers employed.

The Machine That Grades Itself

Here’s how the experiment actually worked, and why it matters more than you think.

Harvey set up a tight feedback loop. An agent attempts a legal task. An LLM judge scores it against a detailed rubric, identifying what the agent nailed, what it missed, and where its reasoning broke down. A coding agent then reads that feedback, clusters the failures, hypothesizes what improvements would help, and rebuilds the relevant components. Then it tries again.

Rinse. Repeat. Learn.
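To make the loop concrete, here is a minimal sketch of the attempt, grade, revise, retry cycle described above. It is not Harvey’s code. Every name in it (`Harness`, `Feedback`, `improve_harness`, and the toy agent, judge, and reviser) is a hypothetical stand-in, and the "judge" is a keyword check rather than an LLM, so the example runs without any model calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Harness:
    """Hypothetical container for what surrounds the model: playbooks, checks, tools."""
    playbooks: list[str] = field(default_factory=list)


@dataclass
class Feedback:
    score: float        # fraction of rubric criteria met, 0.0 to 1.0
    missed: list[str]   # rubric criteria the output failed


def improve_harness(
    harness: Harness,
    run_agent: Callable[[Harness], str],
    judge: Callable[[str], Feedback],
    revise: Callable[[Harness, Feedback], Harness],
    target: float = 0.9,
    max_rounds: int = 5,
) -> tuple[Harness, list[float]]:
    """Attempt, grade, revise, retry. Stops at the target score or after max_rounds."""
    history: list[float] = []
    for _ in range(max_rounds):
        output = run_agent(harness)          # the agent attempts the task
        feedback = judge(output)             # the judge scores it against the rubric
        history.append(feedback.score)
        if feedback.score >= target:
            break
        harness = revise(harness, feedback)  # the harness is rebuilt from the feedback
    return harness, history


# Toy stand-ins (assumptions for illustration only).
RUBRIC = ["parties", "jurisdiction", "claims", "relief"]


def toy_agent(h: Harness) -> str:
    # The "agent" only covers what its playbooks tell it to cover.
    return " ".join(h.playbooks)


def toy_judge(output: str) -> Feedback:
    covered = output.split()
    missed = [c for c in RUBRIC if c not in covered]
    return Feedback(score=1 - len(missed) / len(RUBRIC), missed=missed)


def toy_revise(h: Harness, fb: Feedback) -> Harness:
    # Address one failure per round, mimicking incremental fixes.
    return Harness(playbooks=h.playbooks + fb.missed[:1])


final, scores = improve_harness(Harness(), toy_agent, toy_judge, toy_revise)
print(scores)  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

The point of the design is that only the harness changes between rounds. The model behind `run_agent` stays fixed.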

“Baseline agents with generic harnesses are not able to solve these legal-specific tasks well. Across the 12 tasks, five tasks started between 2-7% success rate. After optimization, the average score across all tasks moved from 40.8% to 87.7%. Every single task improved.”

That’s not incremental progress. That’s the difference between a system you wouldn’t trust with real work and one that’s actually operational.

What got built into these agents through that iterative loop? Cross-document review playbooks. Validation hooks that catch mistakes before the agent ships a deliverable. Structured fact sheets for drafting. File-conversion pipelines that spit out the exact format the client needs. These aren’t features some engineer coded by hand. The agent engineered them itself.
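A validation hook is the easiest of these to picture. The sketch below is an assumption about what such a check could look like, not something taken from Harvey’s paper. The section names, the `[TBD]` placeholder convention, and the function names are all hypothetical.

```python
from typing import Callable

# A hook inspects a draft and returns a list of problems (empty means it passed).
Hook = Callable[[str], list[str]]


def has_required_sections(draft: str) -> list[str]:
    required = ["PARTIES", "FACTS", "PRAYER FOR RELIEF"]  # hypothetical section names
    return [f"missing section: {s}" for s in required if s not in draft]


def no_placeholders(draft: str) -> list[str]:
    # Hypothetical convention: "[TBD]" marks text the agent never filled in.
    return ["unfilled placeholder found"] if "[TBD]" in draft else []


def validate(draft: str, hooks: list[Hook]) -> list[str]:
    """Run every hook and collect all problems so they can be fixed in one pass."""
    problems: list[str] = []
    for hook in hooks:
        problems.extend(hook(draft))
    return problems


draft = "PARTIES\nAcme v. Widget\nFACTS\n[TBD]"
issues = validate(draft, [has_required_sections, no_placeholders])
print(issues)  # ['missing section: PRAYER FOR RELIEF', 'unfilled placeholder found']
```

An agent that runs checks like these before handing anything over can catch a missing section itself instead of waiting for the judge to penalize it.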

Why Most People Are Missing the Point

Here’s what you’ll hear from the skeptics, and they’re not entirely wrong: this was a small-scale experiment on 12 internal tasks. It won’t magically work on the entire universe of legal work.

Fair. Harvey even admits this.

But the core finding is harder to dismiss: given clear input/output examples and a decent grading rubric, you can automatically generate agent toolkits that actually work. When the rubric is high quality, the agent can improve dramatically.
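What does a "decent grading rubric" look like in practice? One plausible shape is a list of weighted criteria. The sketch below is a guess at that structure, not Harvey’s format. The criteria, weights, and names (`Criterion`, `rubric_score`) are invented for illustration, and in a real setup an LLM judge would decide which criteria were met.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    description: str
    weight: int  # relative importance; hypothetical values below


def rubric_score(criteria: list[Criterion], met: set[str]) -> float:
    """Weighted share of criteria satisfied, from 0.0 to 1.0."""
    total = sum(c.weight for c in criteria)
    earned = sum(c.weight for c in criteria if c.description in met)
    return earned / total


lease_rubric = [
    Criterion("identifies renewal terms", 3),
    Criterion("flags assignment restrictions", 2),
    Criterion("summarizes rent escalation", 1),
]

# Here the set of met criteria is supplied by hand; a judge would produce it.
score = rubric_score(
    lease_rubric,
    {"identifies renewal terms", "summarizes rent escalation"},
)
print(round(score, 3))  # 0.667
```

The more specific the criteria, the more useful the list of misses becomes as feedback for the next round.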

That’s the shift nobody’s talking about enough. For twenty years, we’ve been told that AI in law means “better research tools” or “smarter contract analysis.” And those things matter. But they’re still assistance—a lawyer using a tool. What Harvey’s showing is something categorically different: agents that don’t just help. Agents that execute.

The Human Steering, Agent Executing Model

Here’s the part that separates this from the doomsday scenarios you’ve probably read.

Niko Grupen, Harvey’s Head of Applied Research, put it simply: “Humans steer. Agents execute.” A human lawyer, such as a senior associate, sets up the task, defines the rubric, establishes expectations, and presumably does final quality control. But inside that frame, the agent doesn’t need a lawyer watching its every keystroke. It learns. It improves. It operates.

That’s the difference between an AI assistant and real automation. And honestly, it’s the only model that scales without burning through your partner compensation budget.

The legal industry is drowning in complexity. Nearly every workflow—discovery, due diligence, contract review, memo drafting—involves judgment calls, edge cases, and enough variability that a simple rule-based system falls apart. If agents can actually learn from doing these tasks (not just from being fine-tuned once in a lab), then you’ve solved a genuinely hard problem.

So Who Actually Wins Here?

Let’s be direct: this is good news for Harvey’s customers, moderately good news for mid-market and BigLaw firms willing to invest in AI infrastructure, and potentially bad news for junior associates doing document review and first-draft memo work.

But here’s the thing nobody wants to say out loud. Law firm economics depend on those junior positions. They’re the training ground. They’re the profit machine. A 24-year-old first-year billing 300 hours of contract review is how partners get rich. If that work can be automated to an average rubric score of roughly 88% with human oversight, as it was on Harvey’s 12 test tasks, the economic foundation shifts.

That doesn’t mean the work disappears. It means law firms have to figure out how to structure themselves around fewer junior positions and different revenue models. Boutiques and solos may have an edge: they can scale without expanding headcount. BigLaw? That’s a structural problem.

Harvey isn’t the only player working on this. But they’re publishing results. They’re being honest about limitations. And they’re showing something that measurably works, even if only on a small set of tasks so far.

What Comes Next

This is genuinely early stage. Harvey is among the first to be transparent about results like this. But others, including Westlaw, LexisNexis, and the startups nobody’s heard of yet, are building similar systems.

The real question isn’t whether legal agents will improve. They will. It’s whether the legal industry will actually deploy them in ways that change how work gets done. Plenty of firms will buy these tools and use them like Westlaw on steroids—better search, better drafting assistance, but still with the same underlying workflow.

The ones that win will be the ones that rebuild their workflows around what agents can actually do. That means changing how you price work. How you staff. How you think about quality control. And most fundamentally, what you ask humans to do.

Harvey’s experiment shows that’s possible. Whether law actually embraces it? That’s a different question.

Frequently Asked Questions

What is harness engineering in legal AI?

Harness engineering is building feedback loops and environmental structures around AI agents that let them learn from their own mistakes on specific tasks. Instead of updating the underlying model, you improve the agent’s “harness” (its tools, playbooks, validation checks, and execution environment), making it smarter without retraining.

Will Harvey’s legal agents replace junior lawyers?

Not immediately, and probably not completely. But they will automate significant portions of document review, memo drafting, and contract work that junior associates currently do. Firms will need fewer people doing this work, though those who remain will likely supervise agents rather than do the work themselves.

Can these results work on other legal tasks outside Harvey’s 12 test cases?

Maybe, but it’s not guaranteed. Harvey’s experiment worked because the tasks had clear inputs, outputs, and grading rubrics. Real-world legal work often involves judgment calls that are harder to codify. That said, if you can define clear success criteria, the harness engineering approach should theoretically apply to many legal workflows.