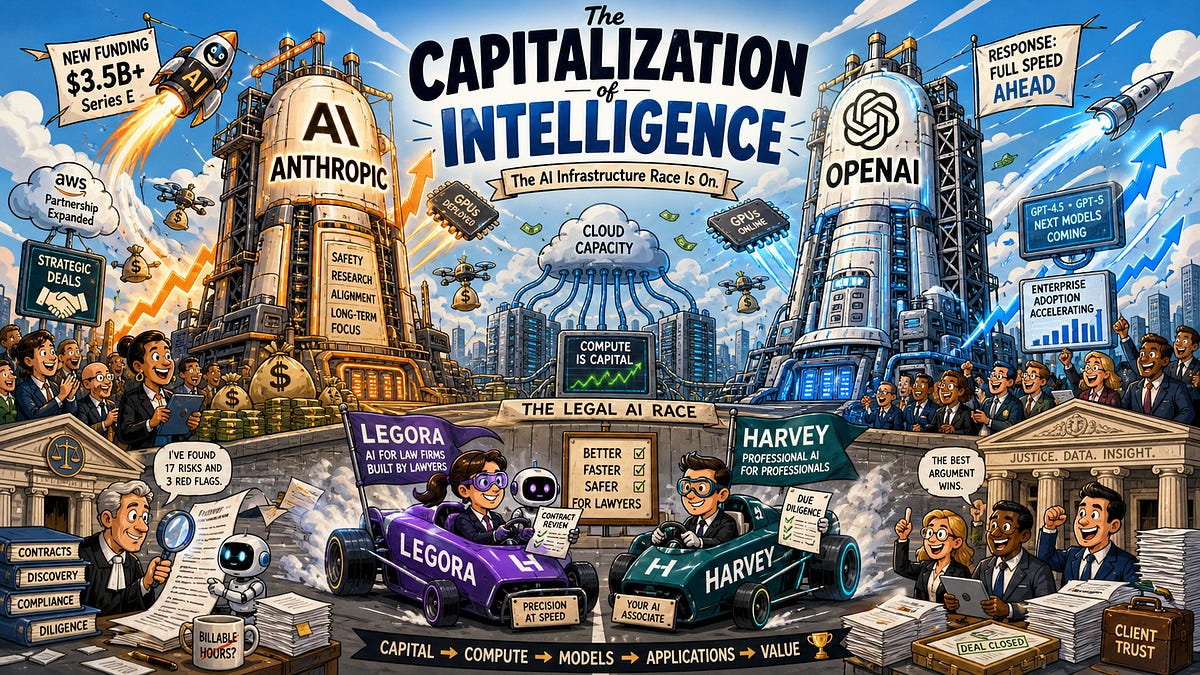

Imagine you’re a startup founder scraping by on venture scraps, training your first LLM, and suddenly AWS slashes prices on custom AI chips — because they’ve got gigawatts of capacity humming for Anthropic. That’s the ripple for real people: cheaper compute, quicker prototypes, less Nvidia lock-in.

The AWS-Anthropic Trainium expansion isn’t some abstract hyperscaler flex. It’s a direct shot at the wallet pains hitting developers everywhere.

Look, two years back, AWS looked like the dog lagging in the AI cloud race. Azure sprinting ahead on new revenue, Google closing the gap with TPUs. Investors dumped Amazon stock hardest among the big four. But now? Evidence mounts for a turnaround.

And here’s the kicker: Anthropic, that under-the-radar scaling beast pulling in a $5B annualized run rate, is Amazon’s secret weapon. The two are inking multi-gigawatt deals and cranking out datacenters faster than ever. Satellite imagery doesn’t lie: over a gigawatt of capacity is in late-stage builds, mostly for Dario Amodei’s crew.

Why Bet Big on Trainium Over Nvidia?

Trainium2 chips. The world’s largest non-Nvidia cluster, with nearly a million of ’em on one campus. Sure, they trail Nvidia in raw specs, but peek under the hood.

Anthropic’s hooked on reinforcement learning roadmaps demanding sky-high memory bandwidth per dollar. Trainium nails that TCO sweet spot.
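To make "memory bandwidth per dollar" concrete, here is a minimal Python sketch of how such a comparison could be set up. Every number in it is a placeholder invented for illustration, not a real spec or price for Trainium2 or any Nvidia part, and the `Accelerator` class and `bandwidth_per_dollar` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    mem_bandwidth_tbps: float  # memory bandwidth in TB/s (placeholder value)
    hourly_tco_usd: float      # all-in cost per chip-hour in USD (placeholder value)


def bandwidth_per_dollar(chip: Accelerator) -> float:
    """TB/s of memory bandwidth bought per dollar of hourly TCO."""
    if chip.hourly_tco_usd <= 0:
        raise ValueError("hourly_tco_usd must be positive")
    return chip.mem_bandwidth_tbps / chip.hourly_tco_usd


# Hypothetical chips: chip_b has the higher raw bandwidth, chip_a the lower cost.
chip_a = Accelerator("chip_a", mem_bandwidth_tbps=3.0, hourly_tco_usd=1.0)
chip_b = Accelerator("chip_b", mem_bandwidth_tbps=8.0, hourly_tco_usd=4.0)

for chip in (chip_a, chip_b):
    print(f"{chip.name}: {bandwidth_per_dollar(chip):.2f} TB/s per $/hour")
```

With these made-up inputs, the chip with the lower raw bandwidth still wins on the per-dollar metric. That is the shape of the argument here: trailing on raw specs does not rule out leading on cost-normalized ones.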

“Anthropic multi-gigawatt clusters, Trainium ramp, best TCO per memory bandwidth, system-level roadmap, Bedrock and internal models.”

That’s straight from the wires — no fluff. Dario’s team co-designed this beast, turning it into de facto custom silicon. Like Google DeepMind with TPUs, Anthropic gets that holy grail: tight hardware-software loop.

But — and this is my dig — AWS datacenters? Bland as yesterday’s blueprints. Air-cooled relics from five years ago. The magic’s inside, not in some liquid-cooled sci-fi shell.

Short version: AWS underperformed because EFA networking flopped for big clusters. InfiniBand eats its lunch. Multitenant GPU scene? CoreWeave, Azure crushing it with better software stacks.

Wholesale? OpenAI picks Microsoft, sure. But Anthropic? All-in on Amazon. That’s flipping the script.

How Trainium Reshapes the Power Hungry AI Wars

Power. The unspoken AI killer. Gigawatts don’t sprout overnight. AWS is sprinting — history’s fastest datacenter ramp-up.
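For a rough sense of what a gigawatt buys, the back-of-envelope arithmetic looks like the sketch below. The all-in watts per accelerator (the chip plus its share of host, networking, and cooling overhead) is an assumed input, not a published Trainium figure, and `accelerators_for_power` is a hypothetical helper.

```python
def accelerators_for_power(facility_watts: float, all_in_watts_per_accel: float) -> int:
    """Whole accelerators a power budget can feed at a given all-in draw each."""
    if facility_watts < 0 or all_in_watts_per_accel <= 0:
        raise ValueError("power must be non-negative and per-accelerator draw positive")
    return int(facility_watts // all_in_watts_per_accel)


ONE_GIGAWATT = 1e9  # watts

# Assumed all-in draws per accelerator, in watts (illustrative only).
for watts in (1_000.0, 1_500.0, 2_000.0):
    count = accelerators_for_power(ONE_GIGAWATT, watts)
    print(f"{watts:,.0f} W per accelerator -> {count:,} accelerators per GW")
```

At an assumed 1 kW all-in, one gigawatt feeds about a million accelerators, the same order of magnitude as the campus figure cited earlier, though the article does not say how much power that campus draws.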

Our proprietary models (think real-time satellite feeds) peg this as anchor-tenant driven. Anthropic’s scaling laws obsession demands it. Revenue quintupled YTD; they’re not slowing.

Here’s my unique take, absent from the chatter: this echoes AWS’s Nitro hypervisor pivot in 2017. Back then, custom silicon gutted VM sprawl, slashed costs 90%, crowned AWS cloud king. Trainium? Same playbook against Nvidia’s GPU moat. Bold prediction: by 2027, Trainium clusters hit a 30% TCO edge for bandwidth-heavy workloads, pulling enterprises from Azure.

Skeptical? Fair. EFA still lags. Trainium2’s no Blackwell slayer yet. But co-design momentum? Anthropic’s whispering roadmap tweaks straight to AWS engineers.

And Bedrock? Internal models? That’s the longer game — AWS hosting Anthropic’s frontier stuff, undercutting rivals on price.

Is AWS’s Cloud Crisis Really Over?

Not entirely rosy. Markets still price in laggard status. Azure’s wholesale wins with OpenAI loom large. Google’s TPU surge narrows gaps.

Yet, ClusterMax ratings expose the crack: highly rated players like Crusoe thrive on multitenant polish. AWS? Playing catch-up there.

But wholesale flips it. Anthropic’s growth is outpacing the OpenAI headlines and the xAI hype. Fivefold growth? That’s oxygen for AWS, the engine behind roughly 60% of Amazon’s operating profit.

Wander a bit: remember EC2’s birth? Skeptics called it vaporware. Now? 33% market share. Trainium’s that bet squared for AI.

Developers win — lower bills. Enterprises? Predictable scaling sans Nvidia famines. End users? Smarter apps, sooner.

Corporate spin check: AWS won’t scream ‘resurgence’ yet. Subtle announcements, partner props. Smart — underpromise, overdeliver.

The Anthropic-AWS Symbiosis Deep Dive

Dario Amodei doesn’t grab splashy Sam Altman vibes. But scaling laws? Ruthless execution.

Gigawatt clusters mean exaflop-scale training. Trainium2’s edge in memory bandwidth per dollar fits RLHF pipelines like a glove: cheaper iterations, faster Claude evolutions.

AWS gets sticky revenue. Anthropic gets priority iron. Win-win, until saturation.

Critique time: overreliance on one customer? Risky. But with Bedrock folding in, diversification brews.

Here’s the thing: this isn’t hype. Satellite-tracked shovels in dirt prove intent. Forecasts? 20%+ YoY AWS growth by the end of ’25.

Frequently Asked Questions

What is AWS Trainium2 and why does Anthropic love it?

Trainium2 is Amazon’s custom AI chip optimized for training massive models. Anthropic digs it for top memory bandwidth per dollar — perfect for their reinforcement learning push, slashing costs vs Nvidia.

Will AWS catch up to Azure in AI cloud dominance?

Possibly, via wholesale giants like Anthropic. Multitenant lags, but gigawatt Trainium clusters could flip TCO wars by 2026.

Does this mean cheaper AI for regular developers?

Yes. More capacity pressures prices down. Expect more Trainium-backed capacity, through EC2 instances and Bedrock, to hit the market soon and undercut GPU spot rates.
