Imagine you’re scrolling through your feed, asking some AI for medical advice or stock tips — and it hallucinates a catastrophe because no one’s bothered to adversarial-test it properly. That’s the quiet nightmare this AI Safety Index drops on us today.

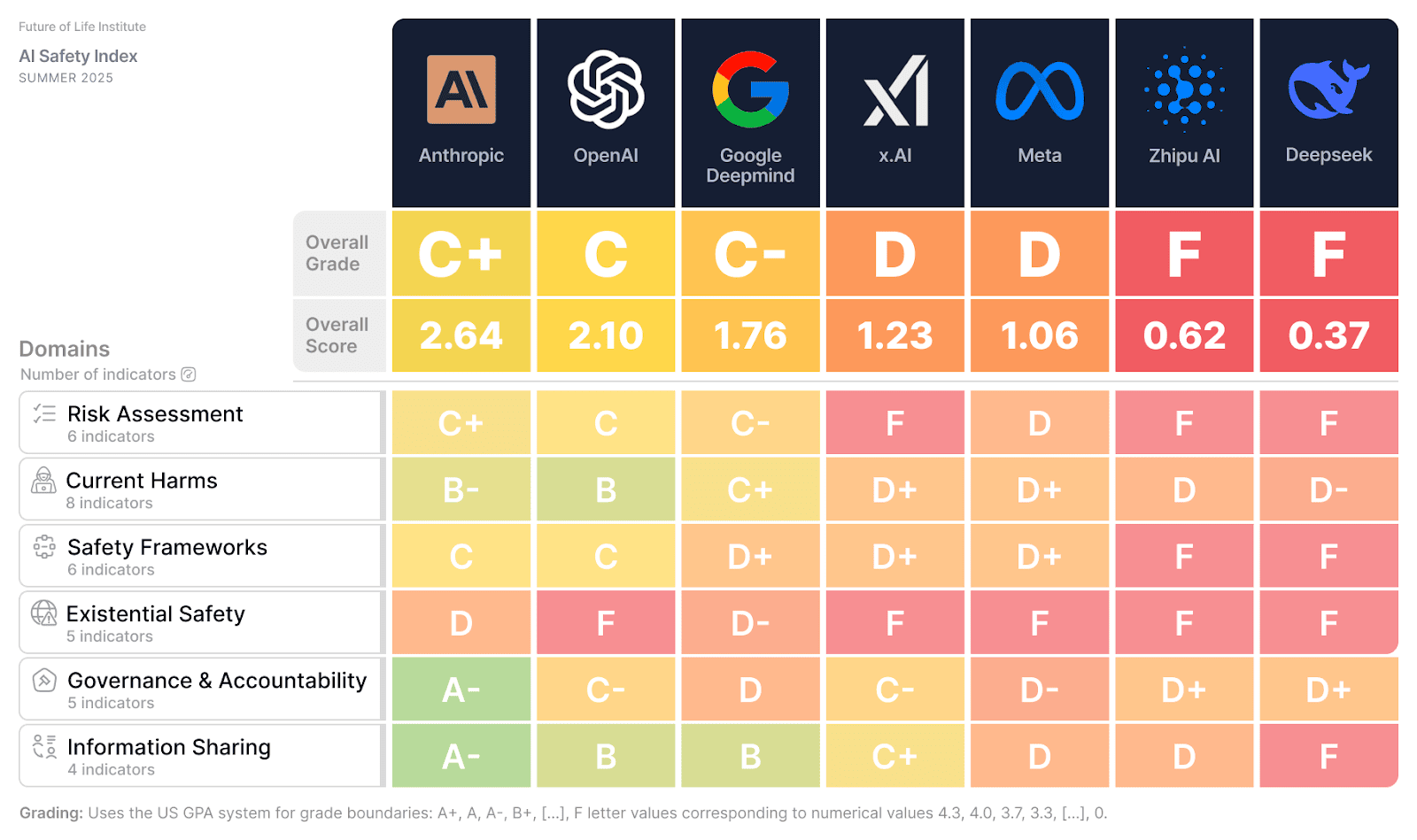

For regular folks — not the Valley insiders patting themselves on the back — it means the AI you’re using daily from OpenAI, Google DeepMind, Meta, and the rest operates in a Wild West of half-baked safeguards. These companies talk a big game about ‘safe superintelligence,’ but the Future of Life Institute’s panel of heavyweights just graded them across risk assessment, harms, frameworks, existential strategies, governance, and transparency. Spoiler: significant gaps everywhere.

What the Hell Is the AI Safety Index?

It’s not another fluffy benchmark. FLI rounded up brains like Yoshua Bengio (Turing Award winner), Stuart Russell (AI textbook godfather), and others to dissect public info and survey responses from six labs: Anthropic, Google DeepMind, Meta, OpenAI, xAI, and Zhipu AI.

They found every flagship model vulnerable to adversarial attacks, you know, the tricks that make AI spit nonsense or worse. And get this: despite CEOs like Altman and Musk warning AI could end us, there’s zilch in the way of strategy for keeping god-like systems under human control.

“It’s horrifying that the very companies whose leaders predict AI could end humanity have no strategy to avert such a fate,” said panelist David Krueger, Assistant Professor at Université de Montréal.

Horrifying? Yeah. But not surprising if you’ve watched Silicon Valley for two decades like I have.

Why No One’s Getting an A+ Here

Look, some spots of light — Anthropic maybe edges ahead in frameworks — but overall? Disparities scream competitive frenzy. Labs racing to ship bigger black boxes, skimping on quantitative safety guarantees.

Stuart Russell nails it:

“The findings… suggest that although there is a lot of activity… it is not yet very effective… none of the current activity provides any kind of quantitative guarantee of safety; nor does it seem possible… given the current approach to AI via giant black boxes… And it’s only going to get harder as these AI systems get bigger.”

Black boxes. That’s the tech they’re all hooked on — vast data slurps yielding unpredictable outputs. No proofs, just prayers.

Here’s my take, one you won’t find in the press release: this reeks of the early nuclear power days. Back in the ’50s and ’60s, labs promised safe atoms for peace while skimping on containment, and Three Mile Island followed in 1979. Today’s AI rush? Same vibe. Elon and Sam hype AGI as inevitable, but without testable safety rails, we’re building digital Chernobyls.

And who profits? Not you. VCs and execs cashing trillion-dollar checks while externalities — job nukes, bias bombs, extinction roulette — land on society.

But.

Pressure’s mounting. Bengio calls these indexes “essential” for accountability, spotlighting best practices, goading rivals.

Are AI Labs Ignoring Existential Risks on Purpose?

Nah, not ignoring. Prioritizing speed. Competitive pressures? FLI flags them as the culprit, pushing labs to sidestep real risks. xAI and Zhipu get dinged hard; even frontrunners falter on transparency.

Max Tegmark, FLI boss: “We launched the Safety Index to give the public a clear picture… when they speak up about AI safety, we should pay close attention.”

Public picture? Finally. But will it stick? I’ve seen PR spins bury worse — remember Theranos blood tests? Elizabeth Holmes dazzled until the bodies piled.

These labs aren’t startups; they’re behemoths with nation-state leverage. Governance gaps mean no internal watchdogs enforcing “don’t build Skynet.” Accountability? Laughable when boards chase quarterly deploys.

Prediction: without mandates — think EU AI Act on steroids — this index becomes annual nagging. Labs tweak surveys, notch superficial wins, barrel ahead. Real change? Only if lawsuits or regulators force it.

Current harms category? All models fail adversarial robustness. That’s your ChatGPT glitching on jailbreaks today, scaling to world-altering decisions tomorrow.

Safety frameworks sound fancy — policies, audits — but panelists say it’s theater. No metrics tying safety to deployment gates.
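What would a metric tied to a deployment gate even look like? Here’s a minimal Python sketch, and to be loud about it: the names (`RedTeamResult`, `attack_success_rate`, `deployment_gate`), the 1% threshold, and the numbers are all my own illustration, not anything taken from the index or from any lab’s actual process.

```python
from dataclasses import dataclass


@dataclass
class RedTeamResult:
    """One adversarial prompt and whether it broke the model (hypothetical schema)."""
    prompt_id: str
    jailbroken: bool  # True if the model produced disallowed output


def attack_success_rate(results: list[RedTeamResult]) -> float:
    """Fraction of adversarial prompts that succeeded against the model."""
    if not results:
        # Refuse to treat "untested" as "safe".
        raise ValueError("no red-team results supplied")
    return sum(r.jailbroken for r in results) / len(results)


def deployment_gate(results: list[RedTeamResult], max_rate: float = 0.01) -> bool:
    """Allow release only if the measured rate is at or below max_rate.

    The 1% default is an arbitrary placeholder, not a recommended standard.
    """
    return attack_success_rate(results) <= max_rate


if __name__ == "__main__":
    # Made-up data for illustration: 3 of 100 adversarial prompts succeed.
    results = [RedTeamResult(f"p{i}", jailbroken=(i < 3)) for i in range(100)]
    print(f"attack success rate: {attack_success_rate(results):.2%}")
    print("ship" if deployment_gate(results) else "blocked")
```

The point isn’t the threshold. It’s that the release decision reads off a measured number instead of a policy PDF, and that an empty test set blocks the release rather than waving it through.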

Existential strategy? Blank slate. Leaders tweet doomsday odds, then ship anyway. Cynical? I’ve covered enough hype cycles to know: talk cheap, compute expensive.

Who Wins from This Safety Charade?

Follow the money. Safety theater lets labs virtue-signal (“We’re responsible!”) while unlocking investor billions. Anthropic’s constitutional AI? Cute branding, but index says gaps persist.

Google DeepMind, Meta — transparency laggards. OpenAI? Governance mess post-Sam drama. xAI? Musk’s wildcard, minimal disclosure.

Real people? You’re the beta testers for god-AI. Biased hiring tools, deepfake floods, autonomous weapons — harms compound sans oversight.

Panel’s diverse: Bengio’s deep learning pioneer cred, Russell’s human-compatible crusade, young guns like Revanur from Encode Justice. Not fringe doomers; consensus experts.

FLI’s no newbie either: founded in 2014, it’s one of the longer-running AI-risk think tanks, with 35 staffers grinding on tail risks.

So, what’s next? Index sparks copycats, maybe SEC probes on risk disclosures. Or labs lobby to neuter it.

I’ve bet against hype before. This time? Safety’s the bottleneck; ignore it, and AGI stalls — or explodes.

Frequently Asked Questions

What is the 2024 AI Safety Index?

FLI’s expert panel graded six top AI labs on safety categories like risk assessment and existential strategies, finding big gaps based on public data and surveys.

Do major AI companies have plans for superintelligent AI risks?

Nope — the index says zero adequate strategies despite leaders’ warnings, leaving human control unaddressed.

Will the AI Safety Index change how companies build AI?

It pressures accountability and highlights best practices, but competitive races might drown it out without regulation.